Handling Multilingual Sites for Humans and Search Engines

Every international SEO project must be evaluated and solved carefully according to its particularities, but there are some basics to cover if you want to ensure a smooth-as-silk experience. This is what we’re going to cover today. Given the worldwide reach of Bruce Clay, Inc., and being an international and multilingual SEO myself, this topic came naturally and is something I’m passionate about.

Combining the Best User Experience and a Perfect Indexation

Let’s look at an example of a site with different languages. We could organize it in directories like:

- http://mysite.com for English, the default language

- http://mysite.com/fr/ for French

- http://mysite.com/es/ for Spanish

- http://mysite.com/ru/ for Russian

My few followers know how often I argue against languages in subdomains when country code top-level domains (ccTLDs) are not an option, but that is another story.

What I propose here will also work for languages organized in subdomains or ccTLDs. There is a double objective to achieve here: two types of visitors (users and bots) are coming to this site and we want to make all of them happy.

Ensure Effortless Experience for Humans

Ensuring an effortless and memorable experience by serving content up in the visitor’s language is a key factor for obvious reasons. We all are more likely to engage and interact, and achieve a site’s goals if we can read Web pages in our language, capisci?

As a user this means:

- I don’t want the site making me select my language if it already knows that detail (yes, it does) the first time I land there.

- I want the Web to remember my language preference when I come back.

Let me show you a couple of examples of what you should never do unless you hate your audience.

Bidz.com welcomes me in Spanish (¡Bienvenidos!) because it detects my browser language, but then asks me to confirm Spanish as my preferred language and whether I want to continue browsing in it. Would you mind just showing the content directly in Spanish and not annoying me, please?

If, for any reason, I decide I want to navigate in English, let me change that. Period. You could save tons of clicks and make a much better user experience by avoiding obvious questions, don’t you think?

The next example takes language handling idiocy one step further. UGG boots, besides being a crime against good taste in shoes (unless you want to look like Mazinger Z), has a site that works this way:

- You land on the United States (English) version of the site, with no attempt to detect or offer other languages automatically.

- You click on the country selector hoping you can jump to another language quickly, but you are sent to a country selector page (a very typical error), where you can (finally) select Spain, supposing it will be in Spanish.

- Ta-da! The site shows the Spanish flag but it is completely in English. Good job.

Make It Easy for Search Engine Bots

Our second goal is to get our content in several languages perfectly indexed, avoiding the typical barriers like language selectors based on browser-side JavaScript or form submission, translations supported by cookies, or server-side sessions without any URL parameter handling language and such.

Describing all those troubles in detail would take a whole other post, but you know the consequences — all the money invested to make business international is wasted because search engines don’t rank anything they cannot index.

Language detection can be done on the server side or the browser side. I advise against the latter for several reasons:

- Server redirections give much better control than JavaScript redirections in the browser.

- The probability of messing up Web statistics is much higher when redirecting in the browser.

- There is no need to deal with users disabling JavaScript.

In any case, we need to come up with some Web programming logic capable of achieving both goals and apply it in the server-side scripting language your site is built on: PHP, .NET, Ruby, Java, Python or any other. That is the takeaway.

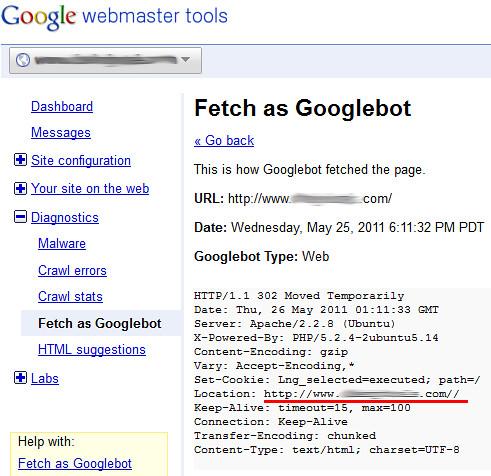

The user experience side of the coin is something you can test yourself. A nice way to test the bot side is using “Fetch as Googlebot” in Google Webmaster Tools.

If your logic is not correct, and it is redirecting not only users but also bots after language detection, you will see something like:

This shows Googlebot being redirected to a non-existent “mysite.com/”, leaving the bot unable to index the site. In other words, a complete disaster.

Show All Content to Bots and the Right Language to Users

All Web programming languages have the required functions/variables to solve this issue, but let me use the PHP language, which is more familiar to me, for the examples.

What can we get from somebody who is asking for a page on your site (HTTP request) before sending it to his/her browser? A couple of interesting things, the User Agent and the language of the browser:

$_SERVER["HTTP_USER_AGENT"];

- Mozilla/5.0 (Windows NT 6.1; WOW64; rv:2.0.1) Gecko/20100101 Firefox/4.0.1

- Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/534.30 (KHTML, like Gecko) Chrome/12.0.742.91 Safari/534.30

- Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0)

- Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)

$_SERVER['HTTP_ACCEPT_LANGUAGE'];

- es,en;q=0.7,en-us;q=0.3

- en-CA

- en-ES, es-US
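To make header values like these usable, they have to be split into language tags and ordered by preference. The following is a minimal sketch of that step, not the author's code: the function name `parse_accept_language` is hypothetical, and it assumes a missing `q` value means full preference (1.0) and that entries with `q=0` can be dropped.

```php
<?php
// Hypothetical helper: turn an Accept-Language header into a list of
// lowercase language tags, most preferred first.
function parse_accept_language(string $header): array
{
    $entries = [];
    foreach (explode(',', $header) as $position => $part) {
        $pieces = explode(';', trim($part));
        $tag = strtolower(trim($pieces[0]));
        if ($tag === '' || $tag === '*') {
            continue; // skip empty entries and the wildcard
        }
        $q = 1.0; // assumption: no q-value means full preference
        foreach (array_slice($pieces, 1) as $param) {
            $param = trim($param);
            if (stripos($param, 'q=') === 0) {
                $q = (float) substr($param, 2);
            }
        }
        if ($q <= 0.0) {
            continue; // q=0 marks a language as not acceptable
        }
        $entries[] = ['tag' => $tag, 'q' => $q, 'pos' => $position];
    }
    // Highest q first; ties keep the order in which the browser sent them.
    usort($entries, function (array $a, array $b): int {
        if ($a['q'] === $b['q']) {
            return $a['pos'] <=> $b['pos'];
        }
        return $b['q'] <=> $a['q'];
    });
    return array_column($entries, 'tag');
}
```

On a live request the input would be `$_SERVER['HTTP_ACCEPT_LANGUAGE']`; for the first sample value above, the function should give back `es`, `en`, `en-us`, in that order.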

A first approach could be to check the User Agent to verify whether the visitor is a human or a search engine bot, but this is a horrible idea:

- The list of bot user agents is longer than eternity (see User-Agents.org), and you know what that means from a coding perspective, right?

- Technically, it is cloaking, and you could get a fantastic penalty and disappear overnight from the search engines. Yee-Haw!

So, reverse the logic; what do users/browsers have that bots don’t? Language. In an HTTP request from a bot, the language variable will typically return nothing. Voilà!

Yes, pretty straightforward: if the request has a language, it is not a bot, it’s a human-like visitor, and you know the browser language. Double win.

OK, OK, for accuracy, a couple of considerations:

- Detecting a language does not always mean the visitor is human, but it does in a very high percentage of cases.

- Browser language is not 100 percent accurate regarding the language preference of the user, but again, there’s a good chance you’re going to guess right.

Nothing is perfect, amigos, but this procedure can get you very close to 100 percent success on both your goals.
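The "does the request have a language?" check described above can be sketched in a few lines. This is an illustration under the caveats just listed; the function name `request_has_language` is hypothetical, and it takes the server variables as a parameter so it can be tried without a live request.

```php
<?php
// Hypothetical helper: true when the request carries a non-empty
// Accept-Language header, which this article treats as a sign of a
// human-like visitor rather than a bot.
function request_has_language(array $server): bool
{
    return isset($server['HTTP_ACCEPT_LANGUAGE'])
        && trim($server['HTTP_ACCEPT_LANGUAGE']) !== '';
}

// On a live request you would pass the superglobal:
// if (request_has_language($_SERVER)) { /* detect language, maybe redirect */ }
// else { /* bot-like visitor: serve the requested URL as is */ }
```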

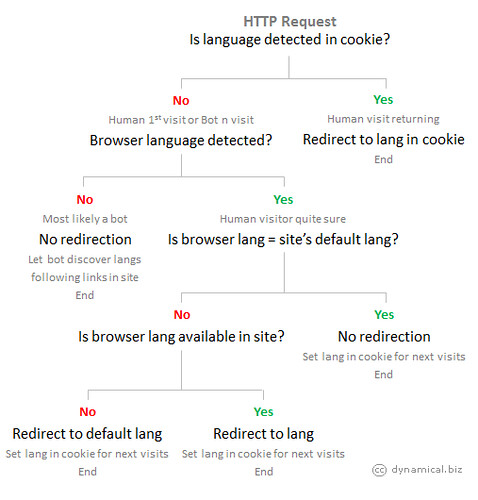

The Logic Behind the Scenes

After several tests, the following programming logic is behind the code; it works fine for me and improves conversions for multilanguage sites. It’s a combination of browser language detection at the server side and language preference stored in a cookie.
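As a rough sketch of how such a combination could look in PHP (one possible reading of the logic, not the original code): the function names, the constants and the order of the checks are assumptions, the site layout is the one from the directory example above, and q-values in the header are ignored for brevity.

```php
<?php
// Assumed layout from the example site: English at the root,
// other languages in their own directories.
const SUPPORTED_LANGUAGES = ['en', 'fr', 'es', 'ru'];
const DEFAULT_LANGUAGE = 'en';

// Returns the language to serve, or null when the request carries no
// language at all (bot-like visitor: serve the requested URL, no redirect).
function choose_language(?string $cookieLanguage, ?string $acceptLanguage): ?string
{
    if ($acceptLanguage === null || trim($acceptLanguage) === '') {
        return null;
    }
    // A preference stored on an earlier visit wins over the browser setting.
    if ($cookieLanguage !== null && in_array($cookieLanguage, SUPPORTED_LANGUAGES, true)) {
        return $cookieLanguage;
    }
    // Simplification: walk the header in the order sent, ignoring q-values.
    foreach (explode(',', $acceptLanguage) as $part) {
        $tag = strtolower(trim(explode(';', $part)[0]));
        $primary = explode('-', $tag)[0]; // "en-ca" becomes "en"
        if (in_array($primary, SUPPORTED_LANGUAGES, true)) {
            return $primary;
        }
    }
    // Browser language not offered on this site: fall back to the default.
    return DEFAULT_LANGUAGE;
}

// Maps a language to its directory on the example site.
function language_path(string $language): string
{
    return $language === DEFAULT_LANGUAGE ? '/' : '/' . $language . '/';
}
```

On a live request the two inputs would come from something like `$_COOKIE['lang'] ?? null` (the cookie name is made up here) and `$_SERVER['HTTP_ACCEPT_LANGUAGE'] ?? null`, with the redirect itself sent through `header('Location: ...')`. When exactly to redirect, for instance only on the first landing, is a decision this sketch leaves open.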

This ensures it’s transparent to search engine bots: they will find no barriers to crawling all the languages available, and users will enjoy a very comfortable experience. I hope you find these tips useful. Thoughts and experiences you would like to share are very welcome.

I want to thank Jessica Lee for inviting me to write for the Bruce Clay blog.

About Ani Lopez

Ani Lopez is SEO manager and Web analytics consultant at Cardinal Path. With a professional background that includes agencies in Europe and North America, Ani has led SEO campaigns targeting several countries and languages for brands like Nokia, Mundia.com (an Ancestry.com company), Carrefour, Amadeus Travel and Solostocks, to name a few. You can follow Ani on Twitter @anilopez.

15 Replies to “Handling Multilingual Sites for Humans and Search Engines”

I was being too harsh because I travel a lot, live in a country with a different language, and have a shared computer at home. There are times it would be nicer to be part of the majority, and I personally get a bit upset when websites try to guess and then force languages on me without asking and letting me confirm or deny.

Thanks for the great info. We think that Google bots easily fetch the data and content rather than the code level.

Indeed you make a good point regarding conversions, as ultimately that is the goal of every website and if something negatively affects that, it should be removed or not implemented.

As you said before, you cannot please everybody, should go for the majority and then it is just too bad for people like me.

Many times it is nice to be a different individual, but there are times it would be more nice to be part of the majority…

Thanks Paul

Right, it is the “dictatorship of the majority” especially when it is all about business & money.

You are very welcome and your disagreement too!

I understand your frustration having to deal with sites not handling preferences according to your situation.

The logic you suggest is pretty “polite” with user but:

– In cases I’ve worked on where the screen asking for preferences has been removed conversions increased.

– It is not going to solve the public usage issue

A completely different, and interesting, story would be UX best practices to make it easy for users to guess how to change language on a site that is not in their language. I encourage you to make a study of it.

What I don’t like about flags is in many cases a flag does not represent a language, Canada’s flag does not mean only English, it is French too.

Display “Español” and not “Spanish” I agree 100% with you.

Yes, so many details could complement the article but unfortunately I don’t have all the time I would like to do it. Again, write about it, take it to the next level!

Alienate people who travel is what some border police makes sometimes when your skin is not pale white :(

Hi Ani,

thanks for the follow up.

Maybe it is just me and I was being too harsh, but because I travel a lot, live in a different language country and have a shared computer at home, I personally get a bit upset when websites are trying to guess and then force languages on me without asking and letting me confirm or deny. Extremely frustrating.

I do understand your basic logic and indeed in some cases it may be useful to use some sort of auto-guess language feature, but IMHO it definitely needs to be done differently than described here as some very important details were left out.

I would suggest the following.

– On first visit, detect browser language by means of your suggestion, show a screen stating that the website guessed “insert language”, with the following options:

— Yes, make default and never ask again

— Yes, but don’t make default and ask on repeat visits

— No, let them choose the correct language and ask for default language yes or no

Now comes, for me anyway, another very important part. You talked about the impossibility of personalization for shared computers, which is of course correct. But to make websites unusable for people on public/shared computers is a bit too much I would say, especially if it can be avoided.

I find that many websites, including MSN/Hotmail as one of the big ones, use TEXT for people to select a different language (and to top it off they have it displayed somewhere near the bottom right corner). This is a very, very big no no, as that makes sure that people who cannot read the language on the website, cannot change it into a language they can read, because the text would be something like “Verander Uw Taal” (Change Your Language in Dutch) and most people have no idea what that means and are therefore unable to change the language.

The far better way, IMHO, is to show either flags of the countries, including the flag of the country of the currently displayed language or list all languages in the header directly but then of course with the languages already translated into their alphabet, i.e. display “Español” and not “Spanish”. Many websites got that wrong, such as the Bidz website and it needs to fit into the design of the website. If it doesn’t fit into the design, then don’t implement the method.

Without those additions, the suggested method leaves out many important parts and is just not a very user-friendly way of doing things.

Indeed “never, ever” is not fitting with SEO in general, but I do think it is correct if talking about the specifics on how to implement the suggested method, as there are far better ways that don’t alienate people who travel, share computers or have multi-cultural relationships and still have all the advantages of the suggested method.

To further specify the problem, ALL people who use public and/or shared computers are affected in a very bad way, if it is done as suggested here.

To name a few:

– Internet cafes

– University/School computers

– Library computers

– Hotel computers

– Coffee shop computers

– Multi-cultural couples who share the same computer at home

I think it is hard to find any website on a subject that never receives any visitors using the above mentioned places. Meaning that the suggested method is at least extremely bad user experience (or sometimes even makes it impossible to use) for some of your readers.

Personalization does not match public usage computers and nobody expects it to happen that way when you are in an internet cafe, library, hotel and such.

Again, they most likely represent a small percentage of visitors in any international SEO project.

By the way, “NEVER, EVER” are words not fitting SEO, even less in capital letters. I guess you skipped first sentence in article.

I have to disagree completely with websites auto selecting languages.

To me it is very frustrating and should never, ever be done automatically.

Take my situation. I live in Thailand with my Thai wife and we both use the same computer and the computer was installed with Thai language.

What this means is that whenever I, non-Thai reading, go to a website which auto selects language, I see Thai gibberish letters which don’t make any sense at all to me.

To make things even worse, is that there IS an option to select a different language on most websites, but of course it is in Thai, which I cannot read.

This happens every single time with for example MSN/Hotmail. My wife logs in with her account, which is set to Thai. When she is done, she logs out. When I go to MSN/Hotmail, it has remembered the Thai language and the “change language” section is in somewhere on the page in THAI. So that means that effectively I cannot use MSN/Hotmail anymore.

Of course now I know where to click, because she told me, but otherwise it would have been impossible for me.

Same goes for Google. Whenever she has logged into her Thai account, Google assumes that it should always display Thai.

NEVER, EVER auto select languages and keep them in memory to serve next time, NEVER. It is just very bad behaviour of websites. IF, and a very big if, you want to have it set to memory, let users specify that. For example hotels.com does a good job at that, but almost every other website is just not friendly to people who use different languages on the same computer.

Of course I understand that my situation is a bit specific, but how many internet cafes do you know?

Since many different nationalities use the same computer in internet cafes, they have the exact same problem as me.

So PLEASE, never ever do auto-selecting language without user input and never ever commit the selected language to default without specific confirmation.

Please, it will make the web a much better place for anybody who uses internet cafes or is located in a foreign country among different nationalities.

Dear Paul, I’m very sorry to tell you that you have not understood the logic presented in the article to handle several languages.

First of all, no site has all the languages of the world so there is always going to be a portion of visitors not finding their language, like Thai in your case, and that was something I said there.

Following the example in article,

– mysite.com English, default language

– mysite.com/fr/ for French

– mysite.com/es/ for Spanish

– mysite.com/ru/ for Russian

There is no Thai so I’ll keep you in the DEFAULT language, (English in this case) and you, as user, will decide if you want to continue in English or switch to any other of the available.

The default language tries to target my larger audience. If I’m not interested in Thailand as a potential market for my business I won’t offer this language for obvious reasons.

The other way round. Let’s imagine my site offers Thai because this country is part of my business.

You, non Thai speaker but living in Thailand, using a browser in that language, would be redirected to the Thai section of the site.

You will be disappointed but once you select English next time coming back you won’t have to select again because I know your preferences.

The rest of visitors from Thailand speaking Thai won’t have to do anything and the user experience will be perfect for them.

They represent a high percentage of my users in this country, not people in your situation. Knowing no solution is perfect I want to make happy the vast majority, not the exceptions.

hotels.com does it differently and also makes some wrong assumptions:

They geotarget location, showing the Canadian flag to me because this is where I’m now

they also show a message “Ver esta página en Español” (see this page in Spanish) because my default browser’s language is Spanish

I change to Spanish and what happens next time I visit the site?

They show content in Spanish because they store language preference in cookie

They show the Mexican flag assuming I’m in Mexico but not, I’m still in Canada = Fail!

Geotarget is adding another variable to equation but:

– Not many sites want to pay a third party to obtain that service

– Again, it is not 100% magic. What if they show content in Spanish because I’m in Spain but I’m a French guy on holidays in Barcelona?

The timing of this article couldn’t be better. Thanks for the great info Ani…especially the logic for determining what language to show. Great stuff!

Really nice post Ani.

I had a little confusion about getting a new domain for a different language, creating a subdomain or just a dir. Your post cleared my doubts.
