Search Engine Optimization Track: Build It Better: Site Architecture For The Advanced SEO

Nom nom, lunch time. I got more popcorn from the Yahoo booth. Don’t judge me. You don’t know me. Anyway, in the interests of saving my hands, I’ll keep this short. Here’s the line up.

Moderator: Vanessa Fox, Contributing Editor, Search Engine Land

Speakers:

Adam Audette, CEO & President, AudetteMedia

Maile Ohye, Senior Developer Programs Engineer, Google Inc.

Lori Ulloa, Sr. Digital Marketing Specialist, R2integrated

Brian Ussery, Director of SEO Technology, Search Discovery Inc.

Vanessa says we’re going to talk about advanced site architecture issues in this session. Hurrays!

Maile Ohye is our first speaker. She gives out her credentials which you should know by now.

Agenda: execute the fundamentals as they relate to understanding the crawl/index/ranking feedback loop

- URL structure

- Response codes

- standard encoding

Prioritize accordingly

- speed/performance

- long-tail content

- duplicate content

- video sitemaps

- microformats

For example: Googlestore.com

158 products, but 380,000 URLs identified by Googlebot because of category filters, price filters, links, etc.

How do you fix it?

- Maintain a consistent URL structure

- Protocol and domain case insensitive

- http://www.example.com

- HTTP://WWW.EXAMPLE.COM

- Reduces duplication

- Facilitates more accurate indexing

- Simplified robots.txt configuration

- disallow: /ipod != disallow: /iPod
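
To make the case rules above concrete, here's a minimal Python sketch using only the standard library. The `normalize_url` helper is my own illustration, not something Maile showed: it lowercases only the protocol and domain, since those are the case-insensitive parts, and leaves the path alone. The robots.txt check uses Python's own parser, which is an assumption standing in for how a real crawler matches rules.

```python
from urllib.parse import urlsplit, urlunsplit
from urllib.robotparser import RobotFileParser


def normalize_url(url):
    """Lowercase the scheme and host only; the path keeps its case.

    Hypothetical helper for illustration. It assumes the URL carries no
    user:password@ prefix, which would also get lowercased here.
    """
    parts = urlsplit(url)
    return urlunsplit((
        parts.scheme.lower(),
        parts.netloc.lower(),
        parts.path,
        parts.query,
        parts.fragment,
    ))


# Protocol and domain are case insensitive, so these collapse to one URL.
same = (
    normalize_url("HTTP://WWW.EXAMPLE.COM/iPod")
    == normalize_url("http://www.example.com/iPod")
)

# Paths are case sensitive, so these remain two different URLs.
different = (
    normalize_url("http://www.example.com/ipod")
    != normalize_url("http://www.example.com/iPod")
)

# robots.txt rules are matched against the path as written.
robots = RobotFileParser()
robots.parse(["User-agent: *", "Disallow: /ipod"])
blocked_lower = not robots.can_fetch("*", "http://www.example.com/ipod")
blocked_mixed = not robots.can_fetch("*", "http://www.example.com/iPod")
```

With one consistent casing for paths, a single `Disallow` line covers what you meant it to cover.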

Respond appropriately: use 301s and rel=”canonical”

Those are crawled less frequently, as are 404 and 410. 400s are also removed from the index.

500s are treated as a transient error. They don’t remove it from the index, and they’ll try again in the future. You can be useful to your users with error code text that explains the problem.
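
Here's a rough Python summary of the response-code handling as I noted it. It's only a restatement of the notes above, not Google's actual logic, and the function name and labels are made up for illustration.

```python
def crawler_treatment(status):
    """Map an HTTP status code to the treatment described in the session notes.

    Hypothetical labels; this mirrors the talk summary only.
    """
    if status == 301:
        return "redirect: crawled less frequently"
    if 400 <= status < 500:
        # Includes 404 and 410.
        return "client error: crawled less frequently, removed from index"
    if 500 <= status < 600:
        return "transient error: kept in index, retried later"
    return "normal: crawl and index"


treatments = {code: crawler_treatment(code) for code in (200, 301, 404, 410, 503)}
```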

Standard vs maverick encoding: Follow standards because Google looks for those and can’t quite figure out other ways. Using key-value pairs reduces maintenance for webmasters. You can also tell Webmaster Tools which parameters to ignore.
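
A quick sketch of why standard key-value pairs are easier to work with: with standard encoding, a few lines of Python can parse the query string, drop parameters that don't change the content, and put the rest in a stable order. The parameter names in `IGNORED_PARAMS` are hypothetical examples, and this is not how Webmaster Tools itself is implemented.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that don't change page content.
IGNORED_PARAMS = {"sessionid", "sort"}


def strip_ignored_params(url, ignored=IGNORED_PARAMS):
    """Drop ignorable key-value pairs and sort the rest for a stable URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ignored
    ]
    kept.sort()
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), parts.fragment)
    )


cleaned = strip_ignored_params(
    "http://www.example.com/shoes?size=5&sessionid=abc123&color=red"
)
```

A "maverick" scheme (say, parameters jammed into the path with custom separators) would need site-specific parsing to do the same thing.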

Feedback loop: Prioritization

- Indexing priorities: what will users find relevant

- URLs with updated content

- New URLs with probability of unique/important information

- Sitemap information

- Ability (eg load capacity, uptime) of site’s Web server to serve content

Increase Googlebot visits by:

- strengthening indexing signals

- uniqueness and freshness

- how well the page is linked from in your site and externally

- use proper response codes

- be interpretable with standard encodings

- serve content reliably

- prevent the crawling of unnecessary content

Optimize performance

Improve long-tail content

- Create unique content or quality user-generated content

- Keep information fresh

- Link internally and externally

Reduce duplicate content

Include Microformats/RDFa

- Enhance results with Rich Snippets

- Ability to include reviews, recipes, people, events

- Other formats exist, possible future adoption (Matt emphasizes this as well.)

Create Video Sitemaps/mRSS feed

- Improve Video/Universal Search presence

- Include various video filetypes (.swf, .mpg, .mpeg, .mp4, .mov, .wmv, .asf…etc)

Adam Audette steps up to the podium. He pimps Vanessa’s book. I really need to pick that up. I’ve heard it’s good.

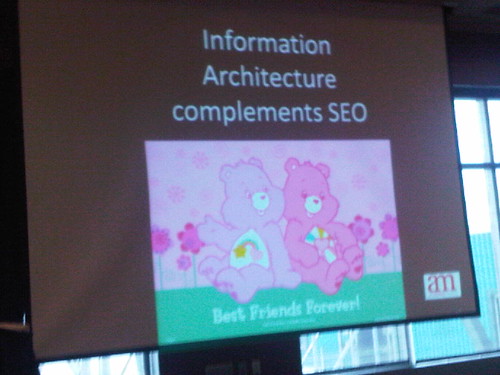

It’s all about user experience. First you have to make the best user experience. Then leverage for maximum SEO. Those things are totally complementary.

Y’all, he just flashed through slides on Dumb and Dumber, Knight Rider, Tenacious D, Bosom Buddies, unicorns, rainbows and Care Bears…

I have no words.

He highlights Amazon’s user experience, pointing out the lefthand column, the breadcrumbs, etc.

You need to evolve your navigations. It’s not just about throwing more links into your nav. Also make use of your link relationships.

Know your internal link profile. You need a robust crawler for that.

Content is more important than ever. Semantic closeness is important.

Use faceted navigation — that’s great for users, however it’s a pain for bots.

- Rewrite facets to pretty URLs based on priority

- Place faceted experience in a folder (more control)

- Append “overhead” attributes to the pretty URLs; rel=canonical back
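
One way to read those three bullets, sketched in Python. Everything named here is an assumption for illustration: the `/shop` folder, the choice of `category` and `brand` as priority facets, and the `example.com` origin. Adam didn't show code.

```python
from urllib.parse import urlencode

# Hypothetical: facets important enough to get a pretty URL, in priority order.
PRIORITY_FACETS = ["category", "brand"]


def facet_urls(facets, base="/shop"):
    """Return (url, canonical) for a dict of selected facets.

    Priority facets become path segments under one folder; everything
    else is "overhead" and is appended as query parameters.
    """
    pretty_parts = [facets[name] for name in PRIORITY_FACETS if name in facets]
    canonical = "/".join([base] + pretty_parts)
    overhead = sorted(
        (key, value) for key, value in facets.items() if key not in PRIORITY_FACETS
    )
    url = canonical + ("?" + urlencode(overhead) if overhead else "")
    return url, canonical


def canonical_tag(canonical_path, origin="http://www.example.com"):
    """Build the rel=canonical link element pointing back at the pretty URL."""
    return f'<link rel="canonical" href="{origin}{canonical_path}">'


url, canonical = facet_urls(
    {"brand": "nike", "size": "5", "category": "shoes", "sort": "price"}
)
tag = canonical_tag(canonical)
```

Keeping the whole faceted experience under one folder means a single robots.txt rule or parameter setting can control it.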

Pagination

Make your “view all” page the canonical version and the default browse. Roll up pages with rel=canonical to that view all page.
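
A small sketch of the roll-up, assuming the default browse URL (no page parameter) is the view all page and that paginated URLs use a `page` query parameter. Both are my assumptions; your URL scheme may differ.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def view_all_canonical_tag(page_url, page_param="page"):
    """Build a rel=canonical tag pointing a paginated URL at its view all page.

    Assumes stripping the page parameter yields the view all URL.
    """
    parts = urlsplit(page_url)
    query = [(k, v) for k, v in parse_qsl(parts.query) if k != page_param]
    view_all = urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(query), "")
    )
    return f'<link rel="canonical" href="{view_all}">'


tag_for_page_3 = view_all_canonical_tag("http://www.example.com/shoes?page=3")
```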

Brian Ussery follows Adam’s pop culture extravaganza. He’s focusing on images.

User intent: “How to tie a tie” — first result: text not so good, images very good.

Bing SERPs – give you video and images

Yahoo – no images or video

Google – images and videos

The key is to understand the engines. Engines try to align photo SERPs with queries that are going to align with user intent.

The size of your monitor will determine how many images will show up in the Universal SERPs.

Understand users – they can review a lot of images at once so they take in a lot of images in general.

Engines don’t access images directly.

(I’m having to skip a bunch here. He’s got so much good information but my hands can’t take it. Here have a checklist!)

Remember that images in Flash aren’t accessible to engines, sometimes JavaScript isn’t viewable either. Provide dimensions.

Provide Creative Commons license in your RDFa file.

Lori Ulloa is our last speaker. [Dana is taking over the notes from here out. —Susan]

There’s Help! You might not have just structural issues; it might be a lot more (shows funny pictures).

Just when you think that Web designers, developers and programmers know SEO, many of them do not.

Ask Questions:

- Is the site on a CMS? If so, which one?

- Server

- Language or platform?

- Are there developers/programmers readily available?

Identify issues:

Pages Indexed?

Talked about how important this is (sort of a duh moment)

- “site: query” (But, Lori, Google is rolling out no #s for site: queries)

Talk to Developer

- If you see stuff that doesn’t look right, write down your findings.

How to Test Canonical

- Why is this important?

- Look to see if your site shows with www. (Troubling, so far this is not advanced material.)

- Tells us to sign up for Google Webmaster Tools & methods to correct canonical issues.

Referring Links

- Shows how to check to see your site link.

(Stopped taking notes, frustrated. We know how to go to Yahoo! Site Explorer to look for links.)

- Talk to the Developer about the origin of the links on your site. If you have no links at all, write content and get links. (Sorry, but BIG DUH!)

XML Sitemap

- How to test for an XML sitemap?

- Put URL (site.com/sitemap.xml) into browser.

- Look at priorities.

- 1.0 for home

- 0.8 top level nav

- 0.5-0.3 for lower nav

- 0 for content you don’t want prioritized or put in robots.txt
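
For reference, here's a minimal Python sketch that builds a sitemap with priorities along the lines Lori listed. The depth-to-priority mapping is my reading of her numbers and the example URLs are made up. The `urlset`, `url`, `loc` and `priority` elements and the namespace come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def priority_for_depth(depth):
    """Map navigation depth to a priority (assumed mapping of the talk's numbers)."""
    if depth == 0:
        return 1.0  # home page
    if depth == 1:
        return 0.8  # top-level nav
    if depth == 2:
        return 0.5  # lower nav
    return 0.3      # deeper pages


def build_sitemap(pages):
    """Build sitemap XML from (url, depth) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, depth in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "priority").text = f"{priority_for_depth(depth):.1f}"
    return ET.tostring(urlset, encoding="unicode")


sitemap_xml = build_sitemap([
    ("http://www.example.com/", 0),
    ("http://www.example.com/products", 1),
    ("http://www.example.com/products/widgets", 2),
])
```

Worth noting that in the lightning round at the end, the advice is not to spend time on priority numbers beyond 1.0 for the home page.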

Site Speed Issues

How to test? Get Firefox addon. Download Firebug and YSlow (Yahoo!) and Page Speed (Google).

(I’m continuing to wonder why I’m taking notes… Remember, Lori, this is SMX Advanced… You don’t need to tell us to make a list and research each list and present possible solutions to developer. Sorry, it is not in my nature to “BOO!” a presentation, but this should be presented as SEO101 or to a Chamber of Commerce.)

“Work with your developer to keep you feeling strong?” THAT was not a strong close.

Q & A

Faceted Navigation

Maile: Google still does not want to see search results in search results. She then refers to Adam’s faceted navigation. Google calls it “additive filters.” Nike, example size=5. Creates 380,000 URLs on a Google Store. Team has talked a lot about this. How do you determine what is the best to crawl? Google is brainstorming, discussion about handling URLs after 2 filters. Prevents them from adding more filters. If too many filters, robots.txt it out.

Google’s solution relies on the site using standard encodings and key-value pairs. Solution – looking to test it and see how it works.

Adam said they see that faceted navigation has strange characters in URLs, not proper encoding. Kinks have not been worked out yet.

Lightning Round

Q: If you have thousands of pages on your site, is it still okay to use rel=”canonical” ?

A. Yes. If pages have slight variations, decide whether to rel=canonical them or try to rank each one. It’s no violation to use rel=canonical.

Q. Speed? Are you measuring client side or the time the server takes to bring back the page?

A. Transfer time is the time they’re looking to calculate. It’s actual user data.

Q. Dublin Core – will it be supported?

A. Richard Baxter @richardbaxter walked by, got distracted.

Q. Something about using text outdent.

A. Vanessa talked about header image, putting it off page -9999. Don’t do it!

Google is working on some best practices. Maile says text indent is not safe.

Q. Does pubsubhubbub get your site ranked faster?

A. Right now, it’s not totally incorporated into pipeline. pubsubhubbub has only been around a couple months.

Q. Is there a cap on URLs on how many can be put into the index for one site?

A. NO!

Q. Is sitemap.xml priority important?

A. Don’t spend time on priority numbers. Do put 1.0 for home page.

OK… that’s it. Susan walks up, dragging her feet and fingers… We agreed our brains are officially “unoptimized” at this point.

9 Replies to “Search Engine Optimization Track: Build It Better: Site Architecture For The Advanced SEO”

I completely disagree with Susan and Dana about Lori’s presentation. Susan, I’m continuing to wonder why you call yourself an editor. Remember, Susan, SMX Advanced recognized the value in Lori’s presentation which is why they placed her on the panel. I thought her presentation was clear, concise, and useful. Having an “advanced” presentation means nothing if attendees can’t retain or use the information. Also, you shouldn’t knock a presenter’s close with a blog that opens “nom, nom, lunch time.” It’s a wonder that anyone takes you seriously.

Don,

Thanks for stopping by. I believe Lori’s presentation was good, useful and informative. I don’t believe it was advanced at the same level that Adam’s was. I don’t think that’s because Lori herself isn’t capable of it — I have full confidence that Lori’s as much of an expert as anyone else sitting on the panel — I just think she made a choice about the level she was speaking to that was different than my expectation of the conference. I’m glad you found it useful and I hope that you told the conference so when you reviewed the session. I know they appreciate the feedback. As do I for that matter.

As for “nom, nom, lunch time”, guilty as charged. We like to be a little casual and fun around here. It’s not everyone’s cup of tea.

Hi ladies,

Thanks for the recap. I didn’t make it to this session, but there’s a lot of great stuff here (Adam Audette on site structure and audits are always a must at any conference). My comment for you – have you seen Maile’s or Brian’s presentations at all on the SMX Advanced site? I noticed they were missing from the list. If you have them let me know, I’d be interested in seeing them. Thanks!

Lori, I made the comments about your presentation, and people sitting around me were also commenting. Your presentation and approach was extremely well done but for another audience, not SMX Advanced. Advanced search marketers don’t need to be told how to look for incoming links or to write new content. We do not spend time breaking down priorities on XML sitemaps. You speak well, and it’s like writing, we need to direct our content for the audience. Susan is correct that anyone can buy a ticket; however, most in the industry are coming here expecting to get something they don’t get at other conferences.

Susan, you did an ABSOLUTELY fantastic job capturing this session, which was fast. AND you got pictures? I’m extremely impressed! Nice job!

Just to mention, I spoke with many people there who found the info helpful and did not know it. There are many levels of “advanced.” Some people understand keyword research, titles and descriptions only but consider themselves advanced. Since I know the other panelists would be more advanced, I took a step back. If a marketer (my intended audience) can’t identify these issues, moving forward will be more difficult for them. If you considered it a “duh” moment, I apologize for wasting your time. Others thanked me for taking a step back.

Lori,

Thanks for stopping by the blog! I agree with you that there were a lot of levels at the conference and for those people, your presentation was valuable. But, and I think you’re agreeing here, your presentation was more basic than the “advanced” level of the conference implies. I actually think this is a larger problem with the conference. There’s no barrier to entry–any level can buy a ticket–so speakers like yourself have to make a choice on whether or not they’re going to address that (and thus end up less advanced) or ignore it and lose part of the audience.

I think your presentation was very good and informative for a more basic audience, it was only that the expectation of “Advanced” content made it seem out of place.

–Susan

Thanks for the notes Susan, got some good takeaways here. Just wanted to drop a comment and say thanks for the hard work!
