3 Reasons to Always Have Structured URLs

Estimated reading time: 6 minutes

Audience: SEOs

Top takeaways:

- The concept of flat site architecture is often misunderstood; structured URLs are the way to go.

- Structured URLs: 1) help semantics, 2) offer the best indexing control and 3) give better SEO traffic analysis.

- Ecommerce sites require special attention for a product that exists in several categories at a time.

Ever since the flat site architecture concept appeared on the SEO horizon and gained some traction around 2010, many SEO consultants have gotten it wrong. Flat site architecture is about the click distance between pages in a site and how relevancy is distributed through the internal link structure; it has nothing to do with URLs.
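To make the distinction concrete, here is a minimal sketch that measures both things for the same page. The link graph and page paths are hypothetical, invented only for illustration; the point is that click distance comes from internal links, while URL depth comes from directories, and the two numbers need not match.

```python
from collections import deque

# Hypothetical internal-link graph: each page maps to the pages it links to.
LINKS = {
    "/": ["/engine-parts/", "/engine-parts/cooling/timing-belt-kit.html"],
    "/engine-parts/": ["/engine-parts/cooling/"],
    "/engine-parts/cooling/": ["/engine-parts/cooling/timing-belt-kit.html"],
    "/engine-parts/cooling/timing-belt-kit.html": [],
}


def click_distance(links, start="/"):
    """Breadth-first search: minimum number of clicks from start to each page."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in distance:
                distance[target] = distance[page] + 1
                queue.append(target)
    return distance


def url_depth(path):
    """Number of directories in the path (the file name itself is not counted)."""
    return len([part for part in path.split("/")[:-1] if part])


product = "/engine-parts/cooling/timing-belt-kit.html"
print(click_distance(LINKS)[product], url_depth(product))
```

In this made-up graph the product page sits two directories deep but is one click from the home page, because the home page links to it directly. Flattening the architecture is a matter of adding links, not of deleting folders.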

The main misunderstanding was, and unfortunately still is, that you have to get rid of directories in URL structures. Although it is widely agreed that you may want to keep URLs short and locate keywords close to the root or left part of the URL, there are many reasons why you should keep a certain structure of folders or directories there. This is what I’m going to explain in this post.

After the flat site architecture concept was introduced, many SEO consultants freaked out and changed their structures from something like:

- myautoparts.com/engine-parts/cooling/audi-a6-quattro-engine-timing-belt-kit.html

To:

- myautoparts.com/cooling-audi-a6-quattro-engine-timing-belt-kit.html

Or even worse:

- myautoparts.com/audi-a6-quattro-engine-timing-belt-kit.html

Another example:

- mycorporatesite.com/blog/women-accessories-design/top-fashion-trends-for-2012.html

To:

- mycorporatesite.com/top-fashion-trends-for-2012.html

A little warning: If, for any reason, you do decide to remove directories from content page URLs, never get rid of category pages. Following the previous examples, do not destroy URLs/pages like:

- myautoparts.com/engine-parts/cooling/

Or:

- mycorporatesite.com/blog/women-accessories-design/

… Because they represent a great space/opportunity for a better content strategy, keyword allocation and internal link building. This is SEO 101, but just in case.

1. Structured URLs Help Semantics

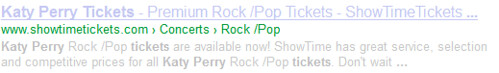

You already know that from a search engine perspective, a site is not a big bag of unordered words. Search engines try to make sense of text by analyzing how it is organized into main topics and subtopics. URLs are the ID of every page, so the more they reveal about how the content is structured, the better. An example of how relevant directories in URL structures can be is the breadcrumbs you frequently see on search engine results pages (SERPs):

It is true that the typical HTML breadcrumbs in a page can trigger them to appear, but I’ve seen many cases where the only reason for that was a clear, organized URL structure (showtimetickets.com/concerts/rock-pop/) with no HTML breadcrumbs at all.

Tip: Add specific semantic markup to breadcrumbs in combination with a coherent URL structure and your chances to get breadcrumbs in the SERPs skyrocket.
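As a rough sketch of that tip, the snippet below derives breadcrumb markup from the directories of a URL. The post does not say which markup vocabulary to use, so the schema.org BreadcrumbList type in JSON-LD is my assumption here. The `https://` scheme and the way display names are derived from slugs are also assumptions; a real site would take names from its CMS.

```python
import json
from urllib.parse import urlparse


def breadcrumb_jsonld(url):
    """Build a schema.org BreadcrumbList from the directories in a URL."""
    parsed = urlparse(url)
    segments = [s for s in parsed.path.split("/") if s]
    root = f"{parsed.scheme}://{parsed.netloc}"
    items = []
    path = ""
    for position, segment in enumerate(segments, start=1):
        path += f"/{segment}"
        items.append({
            "@type": "ListItem",
            "position": position,
            # Assumption: the display name is the slug with hyphens replaced.
            "name": segment.replace("-", " ").title(),
            "item": f"{root}{path}/",
        })
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": items,
    }


markup = breadcrumb_jsonld("https://showtimetickets.com/concerts/rock-pop/")
print(json.dumps(markup, indent=2))
```

The output would go inside a `script` element of type `application/ld+json` on the page. Because each trail item maps to one directory, the markup and the URL structure tell the same story.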

Example:

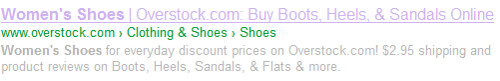

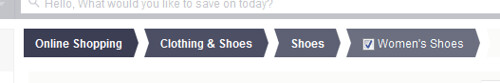

Take the URL: http://www.overstock.com/Clothing-Shoes/Womens-Shoes/692/cat.html. Have a look at the HTML code of page breadcrumbs to see the semantic markup:

You don’t want to miss that extra click-through rate, right?

See here for a look at how Google handles breadcrumb URLs on mobile.

2. Exhaustive Indexation Control

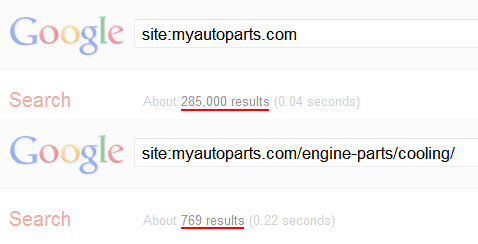

A common task of SEOs is checking how many of the pages in their sites are indexed by search engines. It should be simple: take the list of URLs in the site, take the list of URLs indexed by search engines, and compare. Not so, especially with large sites. Trying to make an exhaustive inventory of indexed URLs to find the non-indexed ones can be a real pain. Even worse, Google’s site: command is not going to show more than 1,000 URLs.

The no-brainer trick here is to use site: command for sections of the site URL delimited by directories. Once you get a number of indexed pages smaller than 1,000, it is not hard to list them all out of the SERPs by using the OutWit Hub Firefox plugin, for example.
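The section-by-section approach can be planned ahead with a short script. This is a minimal sketch, assuming you already have a list of known URLs (from a sitemap or a crawl); the function name and the sample URLs are hypothetical. It groups URLs by directory prefix and builds one site: query per section, so you can see which sections are likely to exceed the 1,000-result limit and need a deeper split.

```python
from collections import Counter
from urllib.parse import urlparse


def section_queries(urls, depth=1):
    """Count known URLs per directory prefix and build one site: query per section."""
    counts = Counter()
    for url in urls:
        parsed = urlparse(url)
        directories = [s for s in parsed.path.split("/")[:-1] if s]
        prefix = "/".join(directories[:depth])
        # Pages with no directory at all fall into the root section.
        section = f"{parsed.netloc}/{prefix}/" if prefix else f"{parsed.netloc}/"
        counts[section] += 1
    return {section: (f"site:{section}", n) for section, n in counts.items()}


known_urls = [
    "https://myautoparts.com/engine-parts/cooling/a.html",
    "https://myautoparts.com/engine-parts/belts/b.html",
    "https://myautoparts.com/brakes/pads/c.html",
]
for section, (query, known) in sorted(section_queries(known_urls).items()):
    print(query, known)
```

If a section holds more than 1,000 known URLs, calling the function again with `depth=2` splits it into its subdirectories.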

Of course, it takes time to collect all URLs indexed by section, but this is one of the reasons they pay us SEOs, right?

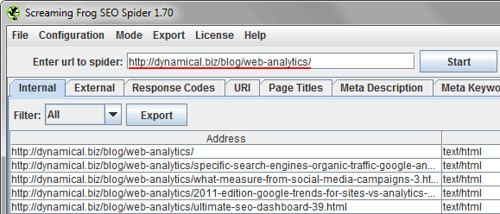

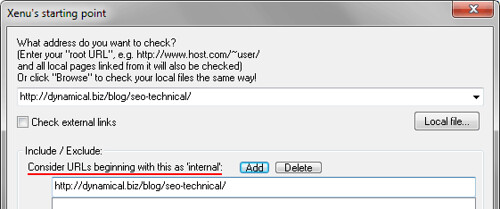

The second step is comparing the indexed URLs with the ones available at the site. Your XML Sitemap should work like a charm and list absolutely all URLs; unfortunately, this doesn’t happen frequently. Use some of those tools that simulate a bot crawling your site like Screaming Frog Spider Tool or Xenu Link Sleuth. Both are able to crawl and list all URLs below a certain directory, but Screaming Frog does that by default. If using Xenu, it’s something you have to configure before a crawl job.

In any case, you can probably see how handy structured URLs can be for the finest indexing control.
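The comparison step itself is a set difference. Here is a minimal sketch, assuming both lists have been exported as plain URL strings and normalized the same way (same scheme, same trailing-slash convention); the function name and sample URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse


def missing_by_section(crawled, indexed):
    """Group crawled URLs that are absent from the indexed list by top-level directory."""
    not_indexed = set(crawled) - set(indexed)
    report = defaultdict(list)
    for url in sorted(not_indexed):
        directories = [s for s in urlparse(url).path.split("/")[:-1] if s]
        section = f"/{directories[0]}/" if directories else "/"
        report[section].append(url)
    return dict(report)


crawled = [
    "https://myautoparts.com/engine-parts/cooling/a.html",
    "https://myautoparts.com/engine-parts/cooling/b.html",
    "https://myautoparts.com/brakes/pads/c.html",
]
indexed = ["https://myautoparts.com/engine-parts/cooling/a.html"]
for section, urls in missing_by_section(crawled, indexed).items():
    print(section, len(urls))
```

Grouping the gaps by directory shows at a glance whether non-indexed pages are scattered or concentrated in one section.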

3. Better SEO Traffic Analysis

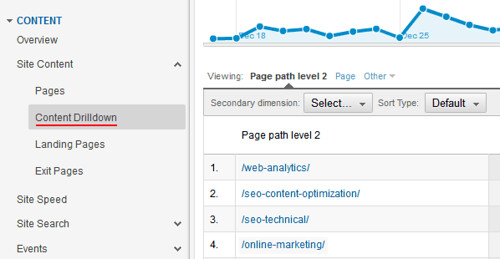

Measuring SEO performance takes up a considerable chunk of time in any project. It was always important to me, but after the Google Panda algorithm update rocked our world, it became essential to analyze organic traffic by sections of the site.

Content drilldown reports are nothing without the proper URL structure.

Reports based on pages are far more useful and easier to manage when we can filter groups of URLs and break them down by section. Again, having directories in URLs makes regular expressions easy as pie, which is a real advantage. Otherwise, you end up with the mother of all regexes, and with the uncertainty of having left some URLs out of the bucket you want to analyze.
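To show how short those regular expressions become, here is a minimal sketch that totals pageviews per section. The report rows, numbers and section names are hypothetical; in practice the rows would come from an export of your analytics pages report.

```python
import re

# Hypothetical rows from a pages report: (page path, organic pageviews).
ROWS = [
    ("/engine-parts/cooling/audi-a6-quattro-engine-timing-belt-kit.html", 120),
    ("/engine-parts/cooling/", 45),
    ("/engine-parts/ignition/spark-plug-set.html", 80),
    ("/brakes/pads/front-brake-pad-set.html", 60),
]

# With directories in the URL, one anchored prefix per section is enough.
SECTIONS = {
    "cooling": re.compile(r"^/engine-parts/cooling/"),
    "engine-parts": re.compile(r"^/engine-parts/"),
}


def pageviews_by_section(rows, sections):
    """Sum pageviews for every path that matches each section's pattern."""
    totals = {name: 0 for name in sections}
    for path, views in rows:
        for name, pattern in sections.items():
            if pattern.match(path):
                totals[name] += views
    return totals


print(pageviews_by_section(ROWS, SECTIONS))
```

With flat URLs, the same grouping would need a pattern that lists every product slug in the section, and every new product would mean editing it.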

If your URLs have not been carefully crafted, traffic analysis is going to be the worst of your nightmares (believe me, I’ve been through that) unless you make perfect use of virtual pageviews. That requires the CMS of your site to have extra fields in the database to handle readable names, plus the code logic to populate those virtual pageviews; it is unlikely to happen and an expensive solution.

For example, the URL:

- myautoparts.com/audi-a6-quattro-engine-belt-kit.html (no directories here)

- Virtual pageview: _gaq.push(['_trackPageview', '/engine-parts/cooling/audi-a6-quattro-engine-belt-kit.html']); (directories inserted by code logic at the page-tracking level, with category names coming from the database to cover the lack of real categories at the URL level)
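The code logic behind such a virtual pageview could look roughly like this. It is a sketch under assumptions: the helper names are hypothetical, the category names are assumed to come from the CMS database as readable strings, and the server is assumed to render the tracking call into the page template.

```python
import re


def slugify(name):
    """Lowercase a category name and join its words with hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")


def virtual_path(categories, page_path):
    """Prefix a flat page path with directories built from category names."""
    directories = "/".join(slugify(c) for c in categories)
    filename = page_path.lstrip("/")
    return f"/{directories}/{filename}" if directories else f"/{filename}"


def tracking_call(path):
    """Render the tracking call shown in the example above for a given path."""
    return f"_gaq.push(['_trackPageview', '{path}']);"


# Assumption: these category names were looked up in the CMS database.
path = virtual_path(["Engine Parts", "Cooling"], "/audi-a6-quattro-engine-belt-kit.html")
print(tracking_call(path))
```

Every template that emits a pageview has to go through this lookup, which is where the extra cost compared with real directories comes from.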

An E-Commerce Scenario

It is quite common at e-commerce sites to have URLs reflecting directory categories and subcategories of products, for example: /engine-parts/cooling/ — but when it comes down to product level, they are all allocated under something like /products/audi-a6-quattro-engine-belt-kit.html — completely out of their natural allocation under corresponding categorized URLs.

I usually dislike this solution, but it is handy to solve duplicate content issues where one product belongs to several categories at the same time. If you must do this, use something better to describe what you sell other than just “products” — it could be /auto-parts/. And at least use one directory for all products. Do not place them directly under root domain.

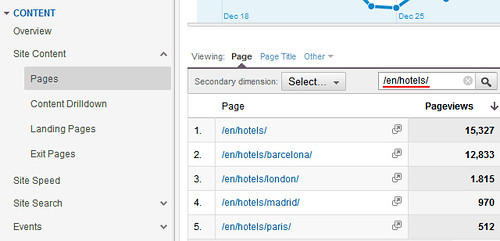

One of the advantages is you can easily guess at-a-glance how much traffic product pages get. I always include a chart in SEO e-commerce dashboards that shows:

- Total visits from organic traffic

- Visits that view product pages

- Visits that added products to shopping cart

- Visits that completed checkout process

This gives me a very nice perspective of how my SEO performs while converting visitors into clients.
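The numbers behind that chart are simple ratios. Here is a minimal sketch with made-up visit counts; the step labels follow the list above, and the function name is hypothetical.

```python
def funnel_rates(steps):
    """Return each step's share of the first step and of the previous step."""
    rows = []
    first = steps[0][1]
    previous = first
    for label, visits in steps:
        rows.append({
            "step": label,
            "visits": visits,
            "of_total": visits / first if first else 0.0,
            "of_previous": visits / previous if previous else 0.0,
        })
        previous = visits
    return rows


# Hypothetical monthly figures, for illustration only.
STEPS = [
    ("Total visits from organic traffic", 10000),
    ("Visits that view product pages", 4000),
    ("Visits that added products to shopping cart", 800),
    ("Visits that completed checkout process", 200),
]
for row in funnel_rates(STEPS):
    print(f"{row['step']}: {row['visits']} ({row['of_total']:.1%} of organic)")
```

The second step is the one that depends on URL structure: with all products under one directory, a single prefix filter isolates the visits that reached a product page.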

In sum, don’t fool yourself with misconceptions. Before making any decision in your SEO, think about the pros and cons. We have reviewed three primary reasons why you should make clever use of directories in URLs for better SEO. Those reasons are:

- They reinforce the search engines’ semantic understanding of your content.

- Indexation control is easier to manage when you have directories.

- You get the full benefit of your analysis capabilities, and thus better insights from your data.

Convinced yet?


20 Replies to “3 Reasons to Always Have Structured URLs”

ABSOLUTELY….a spam URL dropper here….James – you should be ashamed of yourself!! You drop a link here where us SEO types hang out…and what – you thought we wouldn’t notice?

…sigh….

AFAIC…I’ve added that URL & you to my “bozo-filter”

Idiots, eh!

:-(

Jim

PS Ani…ignore the noise…the post carried plenty of signal for those of us who work at our craft, eh!

Hi James, pretty spammy your comment, isn’t it?

low-priced gigs on SEO? ok, you get what you pay for.

Great article, thanks! Correct URL structure really helps with SEO for new website owners, but there are also other ways or techniques to do SEO work. If you just launched you site you can consider sites like http://www.gigs20.com for low-priced gigs on SEO. It really works and you don’t have to spend a fortune…

Great article Ani! Thank you very much!

I just wanted to add that you can add subdirectories to the webmasters tools of search engines.

Thanks again

Welcome John

Oh, I had no idea there was another Vancouver, I’m pretty new to this side of the world, my apologies. Yes, having two cities with the same name can be a challenge (that I never faced by the way).

Hi Ani,

perhaps part of my challenge is that in the 1980’s I was proficient with Comshare’s “System W” which was a multi-dimensional financial modelling program. It solved such data views easily!

Thanks again for your tips. (Another big challenge I have with G is that I live in the little Vancouver, next to the much bigger Portland. I want some of the traffic that is interested in “Portland area real estate” but not from the even larger Vancouver Canada — it’s a tough puzzle sometimes!)

@Ani…

Ahhh….great piece here lad! nice to see us “Canucks” doing well in the SEO world…and dropping by this BC blog and seeing your byline was a treat this am!

And yup, I agree – one bread-crumb trail is all that’s needed….

never ever even inadvertently confuse the G algo….

:-)

Jim

Hi John, I’m a vancouverite too!

No, it makes no sense to have two on-page bread crumb trails. This would just add confusion for the search engines and visitors.

This, as far as I see, is the typical situation where a product/service can be placed under one or more categories, something I described at the end of article “An E-Commerce Scenario”

There are some other ways to solve. Probably the charts you can find here will help you to visualize the solution http://dynamical.biz/blog/seo-content-optimization/web-structure-duplicate-content-canonical-12.html

Cheers

@mark Regarding “it also stops pages from being memorable”: do you really think someone will memorize the full URL of a deep page? And why would they? There are bookmarks and favorites for that today.

Hello Ani,

Thanks for your article. I work most of the time as a real estate broker in Vancouver WA, with my own website. The traditional drill-down is to pick the city you wanted, then neighborhood, then style of home (these are “Lifestyle” shoppers).

Now, a very popular category item that draws traffic is Foreclosures / Bank Owned Homes. Once in this category, that person may go from city to city to see where the best deal is (these are “Bargain” shoppers, as a great deal is more important to them).

Is it reasonable, or does it make sense, to have two on-page bread crumb trails: one for Lifestyle shoppers, and another for Bargain shoppers?

Hi Rick

I appreciate your comments. I don’t think SEO is a “like or dislike” game, that’s why I try to bring reasons to the discussion.

Regarding the hyphenating, although search engines do a pretty good job reading text, it makes complete sense if we make it easier for them and users to understand the information URLs handle including hyphens as word separators. Otherwise it could be misunderstood if several words together could lead them to unexpected meanings.

Matt Cutts did an old video on that specific topic but it is common sense anyway.

In defense of Mark… I-like-the-root-as-well. HOWEVER Ani’s approach makes sense for having a well ordered structure for crawling. Makes for great house keeping too. I think I will give this a whirl for my next large static site client.

Any thoughts as to hyphenating the folders themselves to make them more descriptive? Example: doctors/cancer-treatment/ or

doctors/cancertreatment/

Hi Marc,

“google doesn’t recognize folders as structural” ok, would you mind to explain why not? oh wait you already gave a reason “it also stops pages from being memorable” is being memorable a new ranking factor?

“if you have a page that sites in 2 categories…” that’s a duplicated content issue, not a semantic one.

“putting pages in the root for me is always the best option” no problem, I really would like to see how you face data analysis in your daily SEO work regarding this point. That could be a great article we all would like to read.

but seo wise, google doesnt recognise folders as structural. therefore for seo it doesnt make sense to build them in. it also stops pages from being memorable and aside from this if you have a page that sites in 2 categories the last thing you want to do is pigeon-hole it into one so putting pages in the root for me is always the best option.

Great post Ani! Another advantage of proper URL structure is reporting the $, which is what most top executives always want to hear about. Getting the dollar value of a single directory, drilled down to sub-categories/product pages, is like digging up a gold mine :)

Ani – Thanks for shedding some light on this Ani. I still have way too many conversations with people who defend the site.com/this-is-the-best-way-to-name-all-your-urls model… despite common sense, at least for me, would favour structure.
