Nowhere Left to Hide: Blocking Content from Search Engine Spiders

TL;DR

- If you’re considering excluding content from search engines, first make sure you’re doing it for the right reasons.

- Don’t make the mistake of assuming you can hide content in a language or format the bots won’t comprehend; that’s a short-sighted strategy. Be up front with them by using the robots.txt file or Meta Robots tag.

- Don’t assume you’re safe just because you’re using the recommended methods to block content. Understand how blocking content will make your site appear to the bots.

When and How to Exclude Content from a Search Engine Index

A major facet of SEO is convincing search engines that your website is reputable and provides real value to searchers. And for search engines to determine the value and relevance of your content, they have to put themselves in the shoes of a user.

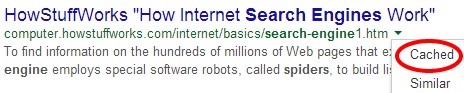

Now, the software that looks at your site has certain limitations which SEOs have traditionally exploited to keep certain resources hidden from the search engines. The bots continue to develop, however, and are continuously getting more sophisticated in their efforts to see your web page like a human user would on a browser. It’s time to re-examine the content on your site that’s unavailable to search engine bots, as well as the reasons why it’s unavailable. There are still limitations in the bots and webmasters have legitimate reasons for blocking or externalizing certain pieces of content. Since the search engines are looking for sites that give quality content to users, let the user experience guide your projects and the rest will fall into place.

Why Block Content at All?

- Private content. Getting pages indexed means that they are available to show up in search results, and are therefore visible to the public. If you have private pages (customers’ account information, contact information for individuals, etc.) you want to keep them out of the index. (Some whois-type sites display registrant information in JavaScript to stop scraper bots from stealing personal info.)

- Duplicated content. Whether snippets of text (trademark information, slogans or descriptions) or entire pages (e.g., custom search results within your site), if you have content that shows up on several URLs on your site, search engine spiders might see that as low-quality. You can use one of the available options to block those pages (or individual resources on a page) from being indexed. You can keep them visible to users but blocked from search results, which won’t hurt your rankings for the content you do want showing up in search.

- Content from other sources. Content generated by third-party sources, such as ads, isn’t part of a page’s primary content. If that ad content is duplicated many times across the web, a webmaster may want to keep it from being viewed as part of the page.

That Takes Care of Why, How About How?

I’m so glad you asked. One method that’s been used to keep content out of the index is to load the content from a blocked external source using a language that bots can’t parse or execute; it’s like when you spell out words to another adult because you don’t want the toddler in the room to know what you’re talking about. The problem is, the toddler in this situation is getting smarter. For a long time, if you wanted to hide something from the search engines, you could use JavaScript to load that content, meaning users get it, bots don’t.

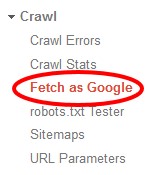

But Google is not being at all coy about their desire to parse JavaScript with their bots. And they’re beginning to do it; the Fetch as Google tool in Webmaster Tools allows you to see individual pages as Google’s bots see them.

If you’re using JavaScript to block content on your site, you should check some pages in this tool; chances are, Google sees it.

Keep in mind, however, that just because Google can render content in JavaScript doesn’t mean that content is being cached. The “Fetch and Render” tool shows you what the bot can see; to find out what is being indexed you should still check the cached version of the page.

There are plenty of other methods for externalizing content that people discuss: iframes, AJAX, jQuery. But as far back as 2012, experiments were showing that Google could crawl links placed in iframes; so there goes that technique. In fact, the days of speaking a language that bots couldn’t understand are nearing an end.

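But what if you politely ask the bots to avoid looking at certain things? Disallowing elements in your robots.txt file or adding a noindex Meta Robots tag is the only dependable way (short of password-protecting server directories) of keeping elements or pages out of the index. The two work differently: robots.txt asks compliant bots not to crawl a URL, while the Meta Robots tag lets the page be crawled but asks that it not be indexed.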
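To make the Meta Robots option concrete, here is a minimal Python sketch that checks whether a page carries a noindex directive. The sample page and the helper names (`RobotsMetaParser`, `robots_directives`) are made up for illustration; only the `<meta name="robots">` tag itself is the real mechanism.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives found in <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").strip().lower() != "robots":
            return
        # The content attribute is a comma-separated list of directives.
        for directive in attr.get("content", "").split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def robots_directives(html: str) -> set[str]:
    """Return the set of robots directives declared in the page's meta tags."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical private page that should stay out of the index.
PAGE = """<html><head>
<title>Account settings</title>
<meta name="robots" content="noindex, follow">
</head><body>Private account details</body></html>"""

directives = robots_directives(PAGE)
print("Asks not to be indexed:", "noindex" in directives)
```

A check like this only tells you what the page is asking for. The bot still has to be able to crawl the page to read the tag, so a page that is also disallowed in robots.txt never gets its noindex seen.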

Google’s John Mueller recently commented that content generated with AJAX/JSON feeds would be “invisible to [Google] if you disallowed crawling of your JavaScript.” He goes on to clarify that simply blocking CSS or JavaScript will not necessarily hurt your ranking: “There’s definitely no simple ‘CSS or JavaScript is disallowed from crawling, therefore the quality algorithms view the site negatively’ relationship.” So the best way to keep content out of the index is simply to ask the search engines not to index it. You can do this for individual URLs, directories, or external files.
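As a rough illustration of those three cases, the Python sketch below feeds a sample robots.txt to the standard library’s `urllib.robotparser` and asks which URLs a bot may crawl. The rules, paths and `example.com` URLs are hypothetical, and Python’s parser only approximates how any particular search engine reads the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt covering the three cases: a directory,
# an individual URL, and an external script file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /account/
Disallow: /search-results.html
Disallow: /assets/ad-loader.js
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url: str, agent: str = "Googlebot") -> bool:
    """True if the sample rules allow the given user agent to fetch the URL."""
    return parser.can_fetch(agent, url)


# Illustrative URLs only; none of these refer to a real site.
urls = [
    "https://example.com/blog/post",
    "https://example.com/account/settings",
    "https://example.com/search-results.html?q=shoes",
    "https://example.com/assets/ad-loader.js",
]

for url in urls:
    status = "allowed" if is_crawlable(url) else "blocked"
    print(f"{status:8} {url}")
```

Keep in mind that a Disallow rule asks bots not to crawl a URL. It does not by itself guarantee the URL never appears in results if other pages link to it.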

This, then, brings us back to the beginning: why. Before deciding to block any of your content, make sure you know why you’re doing it, as well as the risks. First of all, blocking your CSS or JavaScript files (especially ones that contribute substantially to your site’s layout) is risky; it can, among other things, prevent search engines from seeing whether your pages are optimized for mobile. Not only that, but after the rollout of Panda 4.0, some sites that got hit hard were able to rebound by unblocking their CSS and JavaScript, which suggests that blocking these elements from bots may have contributed to the hit.

One more risk that you run when blocking content: search engine spiders may not be able to see what is being blocked, but they know that something is being blocked, so they may be forced to make assumptions about what that content is. They know that ads, for instance, are often hidden in iframes or even CSS; so if you have too much blocked content near the top of a page, you run the risk of getting hit by the “Top Heavy” Page Layout Algorithm. Any webmasters reading this who are considering using iframes should strongly consider consulting with a reputable SEO first. (Insert shameless BCI promo here.)
