What Is a Google Penalty and How Do I Avoid It?

There are two forms of “penalties” that SEOs think about when they refer to a Google penalty.

One is a manual action penalty, which is site-specific and intentionally applied. The other type of “penalty” is really more of a consequence. It happens when a site loses rankings as a result of the Google algorithm.

Google reports that manual actions occur less and less frequently as the algorithm gets smarter. For example, Google applied 2.9 million manual actions in 2020, which is far fewer than the 4 million sent in 2018 and the 6 million in 2017.
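To put those figures in proportion, here is a quick calculation of how far manual actions fell by 2020. It uses only the three counts quoted above; the function and variable names are mine, purely for illustration.

```python
# Manual action counts quoted in the paragraph above, in millions.
manual_actions_millions = {2017: 6.0, 2018: 4.0, 2020: 2.9}


def percent_decline(earlier: float, later: float) -> float:
    """Return the percentage drop from `earlier` to `later`."""
    return (earlier - later) / earlier * 100


latest = manual_actions_millions[2020]
for year in (2017, 2018):
    drop = percent_decline(manual_actions_millions[year], latest)
    print(f"{year} -> 2020: {drop:.1f}% fewer manual actions")
```

By this math, 2020 saw roughly half as many manual actions as 2017.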

Since algorithmic hits account for a growing share of ranking drops, calling them search engine penalties sounds fair to me.

Below I’ll give more details about both types and why penalties exist. Feel free to jump ahead:

- How webmaster guidelines and penalties work

- What is a Google manual penalty

- How Google’s algorithm updates “penalize”

- FAQ: How can websites avoid Google penalties and improve their search engine rankings?

How Webmaster Guidelines and Penalties Work

Google’s “Webmaster Guidelines” (renamed Google Search Essentials in 2022) help website publishers understand what can cause a penalty or poor rankings.

These guidelines are centered on quality. A quality website will provide a good user experience. Websites that offer a good user experience have a better chance of competing in the search results.

Websites that create a bad user experience and violate Google’s guidelines can either receive a manual penalty from Google, or just not rank — or both.

The webmaster guidelines are driven by basic quality principles that include the following:

- Make pages primarily for users, not for search engines.

- Don’t deceive your users.

- Avoid tricks intended to improve search engine rankings. A good rule of thumb is whether you’d feel comfortable explaining what you’ve done to a website that competes with you, or to a Google employee. Another useful test is to ask, “Does this help my users? Would I do this if search engines didn’t exist?”

- Think about what makes your website unique, valuable, or engaging. Make your website stand out from others in your field.

So how do you avoid deceiving your users and create a high-quality site? Google gives a list of things not to do in its “quality guidelines,” which should be a roadmap for your website. For example, don’t publish automatically generated content that exists only to manipulate rankings. And avoid duplicate content as much as possible.

The guidelines also include some pretty basic lessons on spam.

But even if you consider your website and business to be on the up and up, you can still unknowingly get yourself into trouble.

Why? Perhaps you didn’t study the webmaster guidelines closely enough and accidentally got yourself into a link scheme. Maybe it was the guest posting service you hired that got you a penalty. Or, it could be that you hired a cheap SEO service that put your website on a bad trajectory.

Even the savviest of businesses have been caught up in bad SEO practices. So if you do happen to get a drop in rankings or even a manual penalty, don’t feel bad. Just make sure you hire the right SEO professional to fix it.

What Is a Google Manual Penalty?

A manual penalty is reserved for those sites that are violating Google’s webmaster guidelines. This is a very specific action that an employee of Google applies to your site in particular. (This contrasts with an algorithm, which might impact or “penalize” many sites.)

Google explains why manual actions exist:

Ever since there have been search engines, there have been people dedicated to tricking their way to the top of the results page. This is bad for searchers because more relevant pages get buried under irrelevant results, and it’s bad for legitimate websites because these sites become harder to find. For these reasons, we’ve been working since the earliest days of Google to fight spammers, helping people find the answers they’re looking for, and helping legitimate websites get traffic from search. …

Our algorithms are extremely good at detecting spam, and in most cases we automatically discover it and remove it from our search results. However, to protect the quality of our index, we’re also willing to take manual action to remove spam from our search results.

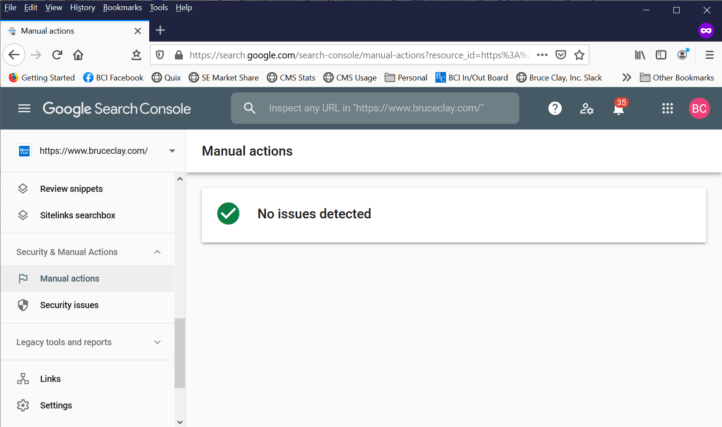

When Google doles out a manual action against your site, you will receive a message from Google.

Of course, you can check the Manual Actions report at any time in Google Search Console. If you’re in the clear, the report shows “No issues detected.”

And Google gives some helpful information on that here:

Manual actions can be detrimental to your website. Just listen to former Googler Fili Wiese talk about how having one stops the growth of your website:

So it’s important to address these manual action notices right away. You can find more information from Google on how to address those here.

How Google’s Algorithm Updates “Penalize”

Recently, I wrote about why sites lose rankings. Algorithm changes are one of those reasons. Keep in mind that Google makes more than 3,000 improvements to Search each year, including frequent algorithm updates. And the ranking algorithms consider hundreds of different factors.

Changes to the ranking algorithm can include new factors being added, factors being reorganized, or factors being increased or weakened in strength. For example, the Page Experience update takes several preexisting ranking factors and combines them with new “core web vitals” to create a new ranking signal.
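To see why reweighting factors can reorder results even when no page changes, consider the toy model below. It is only a sketch: the factor names, scores, and weights are invented for illustration and say nothing about how Google actually scores pages.

```python
# Toy model only: factor names, scores, and weights are invented for
# illustration and do not describe Google's real ranking system.
pages = {
    "page_a": {"content": 0.9, "links": 0.4, "page_experience": 0.3},
    "page_b": {"content": 0.6, "links": 0.8, "page_experience": 0.9},
}


def rank(pages, weights):
    """Order page names by weighted factor score, best first."""
    def score(factors):
        return sum(weights[name] * value for name, value in factors.items())

    return sorted(pages, key=lambda name: score(pages[name]), reverse=True)


# Hypothetical weights before and after an update that adds a new signal.
before = {"content": 0.7, "links": 0.3, "page_experience": 0.0}
after = {"content": 0.5, "links": 0.3, "page_experience": 0.2}

print(rank(pages, before))  # page_a scores 0.75, page_b scores 0.66
print(rank(pages, after))   # page_a scores 0.63, page_b scores 0.72
```

Neither page changed, yet the order flipped once the new signal carried weight. That is the kind of shift that can feel like a penalty.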

Then you throw Google’s AI into the mix — RankBrain, which helps deliver what it believes to be the most relevant results — and your rankings could change in an instant.

As a result, any websites that are not weathering the changes that Google is making may experience lost rankings and traffic. This may feel like a penalty. And, of course, you want to avoid Google penalties of all kinds. However, keep in mind that an algorithmic penalty is just Google trying to serve the highest quality sites on Page 1 of the search results.

If you’ve experienced this, the smart thing to do is to understand how your website can improve so that it’s more like the websites that are now ranking in your place. As I’ve always said: SEO should beat the competition, not the algorithm. So get up, dust yourself off, and start analyzing the top results.

If you need an expert SEO to help you address a Google ranking penalty or otherwise improve your website’s visibility, contact us for a free quote and consultation today.

FAQ: How can websites avoid Google penalties and improve their search engine rankings?

Avoiding Google penalties and optimizing search engine rankings are paramount for website success. As the dominant search engine, Google has established rigorous guidelines and algorithms to assess websites’ quality and relevance. Falling afoul of these guidelines can lead to penalties that drastically hamper visibility and traffic.

Understanding Google’s Algorithms and Guidelines

At the heart of Google’s ranking system lie complex algorithms that evaluate websites’ credibility, relevance, and user experience. Staying informed about these algorithms, such as Panda, Penguin, and Core Updates, is essential. Regularly monitoring Google’s official webmaster guidelines is crucial for staying aligned with their expectations.

Crafting High-Quality, Engaging Content

Content remains king, and websites must prioritize creating valuable, informative, and engaging content. Strive to address users’ queries comprehensively, utilizing relevant keywords naturally. Diversifying content formats, from articles and infographics to videos and podcasts, can enhance user engagement, signaling quality to search engines.

Nurturing a Healthy Backlink Profile

Building a robust backlink profile is a cornerstone of SEO success. However, the emphasis should be on quality over quantity. Acquiring authoritative, relevant backlinks from reputable sources can bolster a website’s credibility. Avoid dubious practices like link farms, as they can trigger severe penalties.

Prioritizing User Experience and Technical Excellence

User experience (UX) plays a pivotal role in ranking. Ensure your website is responsive, easy to navigate, and quick to load. Google’s Core Web Vitals have gained prominence, measuring page loading speed, interactivity, and visual stability. Optimizing these aspects not only helps protect your rankings but also enhances user satisfaction.
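As a rough illustration, the sketch below rates a page against the thresholds Google has published for the three Core Web Vitals: Largest Contentful Paint (loading), Interaction to Next Paint (interactivity, which replaced First Input Delay in 2024), and Cumulative Layout Shift (visual stability). The sample measurements and the function name are hypothetical.

```python
# Thresholds as published by Google for Core Web Vitals:
# (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),  # Largest Contentful Paint
    "inp_ms": (200, 500),       # Interaction to Next Paint
    "cls": (0.1, 0.25),         # Cumulative Layout Shift (unitless)
}


def rate(metric: str, value: float) -> str:
    """Classify one measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Hypothetical measurements for a single page.
sample = {"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.31}
for metric, value in sample.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```

In practice the values would come from field data, such as the Core Web Vitals report in Search Console, rather than being typed in by hand.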

Monitoring, Analyzing, and Adapting

Regularly monitor your website’s performance using tools like Google Analytics and Google Search Console. These platforms offer valuable insights into user behavior, search queries, and technical issues. Analyze this data to identify areas for improvement and adjust your strategies accordingly.
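One simple way to analyze that data is to compare clicks per page across two periods and look at the biggest losers first. The sketch below assumes two CSV exports with `page` and `clicks` columns; those column names and the sample rows are made up, and a real Search Console export may use different headers.

```python
import csv
import io


def load_clicks(csv_text: str) -> dict[str, int]:
    """Parse CSV text with `page` and `clicks` columns (assumed headers)."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["page"]: int(row["clicks"]) for row in reader}


def biggest_drops(before_csv: str, after_csv: str, limit: int = 3):
    """Return up to `limit` (page, change) pairs, largest click loss first."""
    before = load_clicks(before_csv)
    after = load_clicks(after_csv)
    # A page missing from the later period counts as zero clicks.
    changes = [(page, after.get(page, 0) - clicks) for page, clicks in before.items()]
    drops = [change for change in changes if change[1] < 0]
    return sorted(drops, key=lambda change: change[1])[:limit]


# Hypothetical data for two comparison periods.
before_csv = "page,clicks\n/services,400\n/blog/penalties,250\n/contact,90\n"
after_csv = "page,clicks\n/services,390\n/blog/penalties,110\n/contact,95\n"

print(biggest_drops(before_csv, after_csv))
```

Pages at the top of that list are the ones to compare against whatever is now outranking them.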

Step-by-Step Procedure: Navigating Google Penalties and Enhancing Rankings

- Familiarize yourself with Google’s algorithms, including Panda, Penguin, and Core Updates.

- Stay updated on Google’s webmaster guidelines to meet their expectations.

- Create high-quality, informative, engaging content addressing user queries.

- Utilize relevant keywords naturally within your content.

- Diversify content formats to cater to different audience preferences.

- Build a strong backlink profile with authoritative, relevant sources.

- Avoid unethical practices like link farms or paid link schemes.

- Prioritize user experience by ensuring a responsive and navigable website.

- Optimize page loading speed, interactivity, and visual stability for better Core Web Vitals scores.

- Regularly monitor your website’s performance using Google Analytics and Search Console.

- Analyze user behavior, search queries, and technical issues from the collected data.

- Identify areas for improvement in content, user experience, and technical aspects.

- Make necessary adjustments to your strategies based on the insights gained.

- Continuously update and refresh your content to maintain relevance.

- Engage with your audience through social media and other platforms.

- Address negative user experiences and feedback promptly.

- Implement structured data markup to enhance search result appearance.

- Collaborate with reputable websites on guest posts that genuinely serve readers, and avoid excessive link exchanges, which Google treats as a link scheme.

- Regularly audit your website for broken links, technical errors, and outdated content.

- Stay informed about industry trends and algorithm updates to adapt your strategies.
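The structured data step in the list above can be sketched in a few lines. This example builds a schema.org `Article` object as JSON-LD and wraps it in the script tag that goes in a page's HTML. The function name and the sample values are placeholders, and which properties a given search feature requires is something to confirm in Google's structured data documentation.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str) -> str:
    """Build a JSON-LD script tag describing an article (illustrative helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
    }
    body = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script tag early;
    # "\/" is a valid JSON escape for "/".
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values; substitute the real page details.
print(article_json_ld("What Is a Google Penalty?", "Example Author", "2024-01-15"))
```

Markup like this does not guarantee an enhanced listing, but it gives search engines an unambiguous description of the page.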

22 Replies to “What Is a Google Penalty and How Do I Avoid It?”

I am reading all content they have to provide a good information about SEO, and google penalty tool is a very helpful they identify for google updates and algorithms.

The most convincing inspiration for the google discipline is the place where you embrace the spamming methodology or achieve something which is against the google arrangements.

Thanks for telling what is google penalty. I am always searching online for articles that can help me. There is obviously a lot to know about this. I think you made some good points in Features also. Keep working and share more posts, you are doing great job.

The most compelling motivation for the google punishment is the point at which you adopt the spamming strategy or accomplish something which is against the google agreements.

The biggest reason for the google penalty is when you take the spamming approach or do something which is against the google terms and conditions.

Really an awesome strategy and tips on google penalty and indexing. I have been getting lots of problem in indexing the articles

Awesome guide! This is such a great help to avoid penalty and work accordingly. Thank you for the knowledge sharing.

Awesome content about Google penalties. It helps people who work on websites to rank in Google.

It’s also important to know how a brand can protect their website avoiding Google penalties beside its progression.

This blog seemed to be a helpful one!!

Right. because if we generate continuously 150 backlinks/day for 15 days and there is nothing in the next 5 days then google will penalize you.

For a good website, you have to create good backlinks, then Google will not penalize.

Thank you, Bruce Clay, After your article, I believe I will follow your instruction and save my website from the penalty

Thanks, Bruce for sharing informative content with us. As I am a learner it helps me a lot in SEO. I just learn that we should have to save our websites from Google Penalty.

You have to need quality backlinks, On the contrary, you have to remove broken backlinks and also you have to do, apply the white hat SEO method.

A Google penalty is a great tool that helps us in our identity if we have been hurt by any Google algorithm update or by manual actions and supply insight which is used to fix the problem. There are few steps that we have to implement to fix the google penalty like Analyze the results, start link detox, start link detox boost, disavow bad links in (LRT) and etc.

Very Good Information about google Penalty.

Every blog is very valuable and very informative

You need to remove low quality backlinks & content to recover your website from Google Penalty.

Hey, Its is great article. I was knowing about the facts about the facts like google penalty. your article has clear my mindset totally and will be more careful about the things while doing it. Thank you so much for clearing the concept.

Thank you sir Bruce, we just checked all our Google webmaster for all our clients, found “No issues detected.”
