RankBrain: What Do We Know About Google’s Machine-Learning System? #SMX

SEO is very tactical and we always try to look behind the curtain of Google’s algorithms. So, it’s no surprise that we all want to know more about RankBrain. RankBrain is Google’s machine learning system that they confirmed out of the blue in October 2015.

In this session, we’ll learn about RankBrain via the studies done by our presenters.

Moderator:

Danny Sullivan, Founding Editor, Search Engine Land (@dannysullivan)

Speakers:

- Eric Enge, CEO, Stone Temple Consulting (@stonetemple)

- Marcus Tober, Founder/CTO, Searchmetrics Inc. (@marcustober)

Danny Sullivan says that a few months ago, Google said, “Oh hey, by the way, we have this new thing called RankBrain and it’s the third most important signal that factors into ranking.” SEOs asked, “Can you tell us about that?” Google said no. We still want to understand it.

Marcus Tober: Machine Learning Ranks Relevance

Tober’s company Searchmetrics studied Google results to understand:

- What RankBrain does

- How RankBrain works

- What RankBrain means for websites and SEO

He had two full-time people work for three weeks to collect the RankBrain data he is presenting here today.

Before we talk about details and key findings, it’s important to look at machine learning (ML) and artificial intelligence (AI) first. So they dug through patents and papers.

ML is not AI

Machine learning is an algorithm that improves over time. Deep learning aims to bridge the gap between ML and AI and it solves more complex problems. Human-like intelligence (AI) is the end game.

Examples of ML:

- The junk mail filter in your email inbox

- Photo recognition, like how Facebook recognizes you in a photo

- Recommendations in iTunes, Spotify, and Netflix

The limits of machine learning are shown in the recent news that Google’s machine learning system beat a human in the game Go:

- More than 2,500 years old

- Played by 40 million people worldwide

- Is Go really that hard? Well, actually yes.

- There are 10^50 possible moves in a chess game.

- There are 10^171 possible moves in a game of Go.

- Google’s program AlphaGo beat the European champion, Fan Hui, 5–0.

Deep learning and AlphaGo:

- Traditional A.I. methods, which analyze all possible positions, failed.

- AlphaGo uses deep neural networks across 12 different network layers.

- One neural network selects the next move to play. The other neural network predicts the winner of the game.

- AlphaGo has a lot to show us about RankBrain.

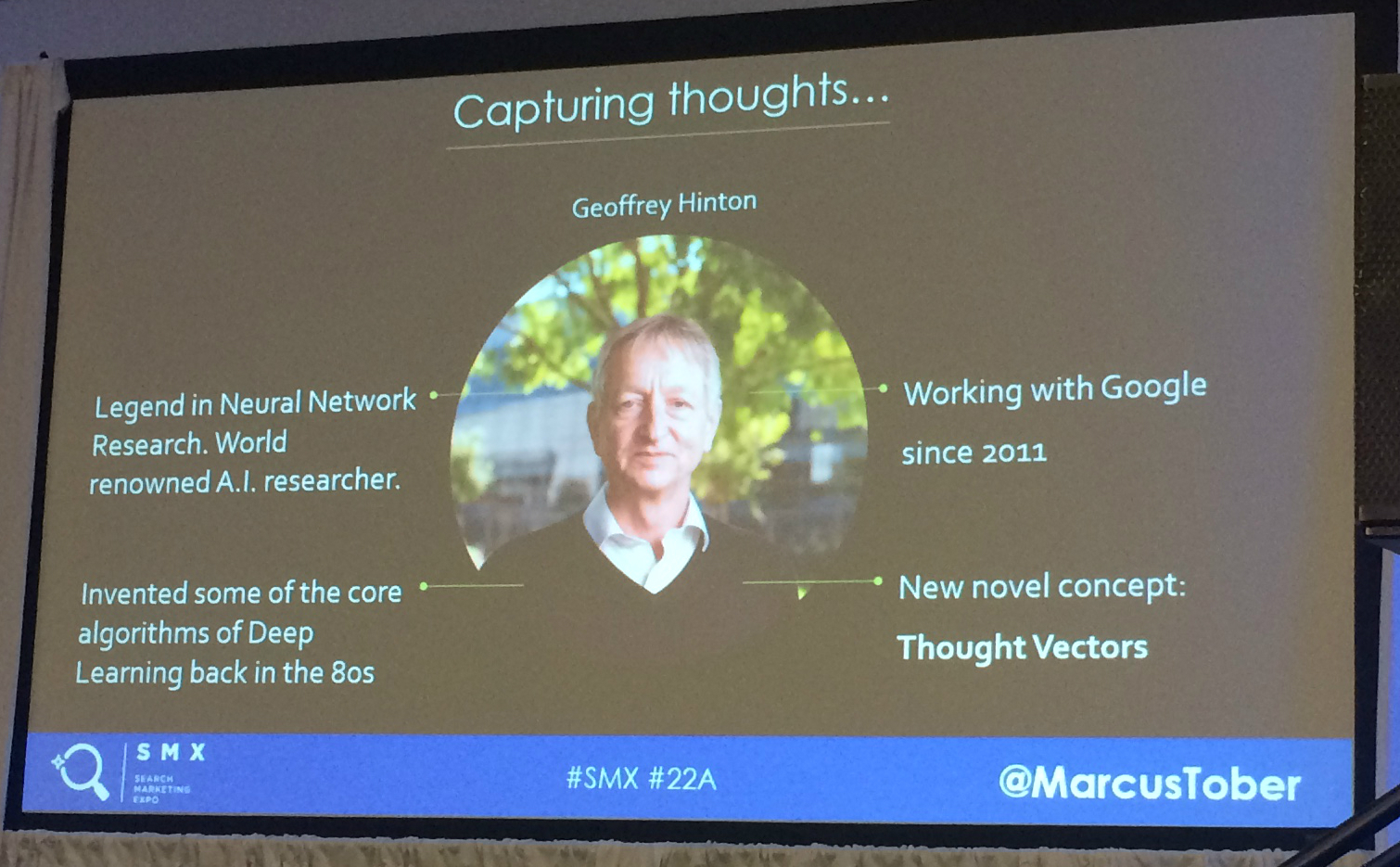

There’s one project that sticks out in their research of Google patents, and that’s the work of Geoffrey Hinton:

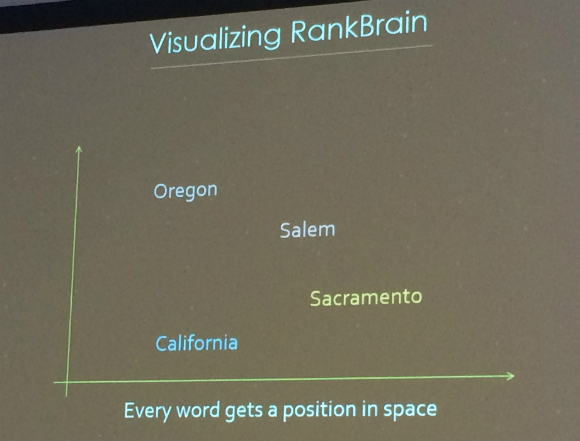

Here’s Hinton’s research in a simplified nutshell. Let’s talk about thought vectors. Imagine empty space. Every word in the world has a position and a proximity to other words. Google can map queries in search in that space so you get the proximity and distance of different queries.

When a query such as “What’s the weather going to be like in California?” is searched, RankBrain can interpret it as “Weather Forecast California.” Using training data, similar query sentences (with similar results) are closely positioned.

Searches for “credit card” and “debit card” will be close to each other in space. Results scoring is where Google results rank better based on proximity. Good results, with higher relevance, have higher proximity to each other.
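The idea of proximity in a vector space can be sketched in a few lines of Python. This is only an illustration: the three-dimensional vectors below are invented, the `closest` helper is hypothetical, and cosine similarity is one common way to measure proximity, not necessarily the measure Google uses.

```python
import math

# Hypothetical 3-dimensional vectors, invented for illustration only.
# Learned vectors in real systems have far more dimensions.
VECTORS = {
    "credit card": [0.9, 0.8, 0.1],
    "debit card": [0.8, 0.9, 0.2],
    "weather forecast": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def closest(query, vectors=VECTORS):
    """Return the other phrase whose vector is most similar to the query's vector."""
    others = (phrase for phrase in vectors if phrase != query)
    return max(others, key=lambda p: cosine_similarity(vectors[query], vectors[p]))


print(closest("credit card"))
```

With these made-up numbers, “credit card” lands next to “debit card” and far from “weather forecast,” which is the kind of relationship Tober describes.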

The Searchmetrics Study

Here was the Searchmetrics hypothesis: traditional ranking factors can no longer explain typical organic rankings. This doesn’t apply to all queries. Google has said that RankBrain is not used on every query. When it is used, it is the third most important ranking signal.

- RankBrain concentrates on relevant content.

- RankBrain uses thought vectors to map relevant results to queries.

- RankBrain assigns a relevance score, then orders rankings in real time.

Brief study background:

- Used top 30 results for Google U.S.

- Used 400K data points

- Used 3 different keyword sets with no overlap: loan, ecommerce, health

- The approach was to discover the ranking factors that were most important

- They tried to emulate RankBrain by adding scores to understand the content relevance

In order to do this analysis, they had to remove all previous understanding of ranking factors. This includes backlinks and internal links, keyword in title, word count and interactive elements used. They cleared the slate of their presupposed ranking factors in order to get the new ranking signals.

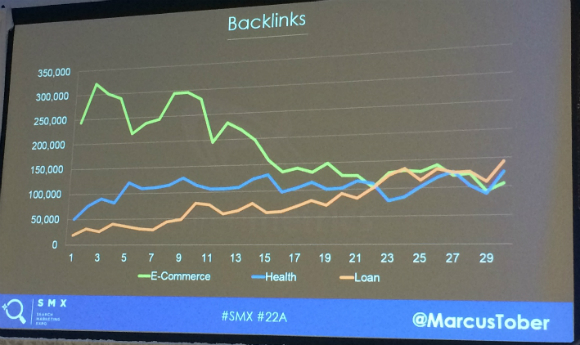

Backlinks:

We see that for ecommerce keywords, there is a positive correlation between rankings and backlinks. However, for health and loan queries, rankings and backlinks have a negative correlation.
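For readers who want to see what a positive or negative correlation between rankings and backlinks looks like in numbers, here is a minimal sketch. The session did not say which correlation measure Searchmetrics used; Spearman rank correlation is an assumption here, and the backlink counts are made up, not data from the study.

```python
def ranks(values):
    """Rank values from 1 (smallest) upward; assumes no ties, for simplicity."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, index in enumerate(order, start=1):
        result[index] = rank
    return result


def spearman(xs, ys):
    """Spearman rank correlation for two equal-length, tie-free sequences."""
    n = len(xs)
    d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(xs), ranks(ys)))
    return 1 - 6 * d_squared / (n * (n * n - 1))


# Made-up numbers, NOT data from the study.
positions = [1, 2, 3, 4, 5]
# Negate positions so that a higher value means a better ranking.
ranking_quality = [-p for p in positions]
backlinks_a = [900, 700, 400, 300, 100]  # better positions have more links
backlinks_b = [100, 300, 400, 700, 900]  # better positions have fewer links

print(spearman(ranking_quality, backlinks_a))  # positive correlation
print(spearman(ranking_quality, backlinks_b))  # negative correlation
```

The first toy set mirrors the pattern reported for ecommerce keywords, and the second mirrors the pattern reported for health and loan queries.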

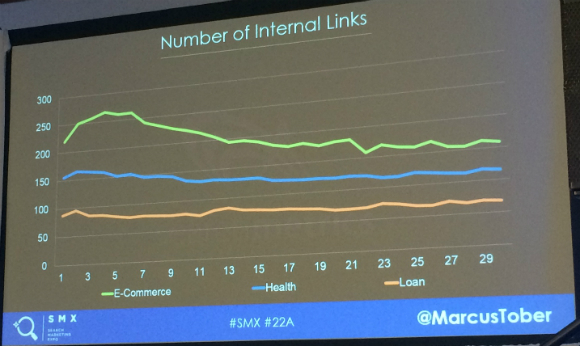

Internal links:

For loan queries, the average number of internal links is less than 100. For ecommerce, the average number of internal links is much higher.

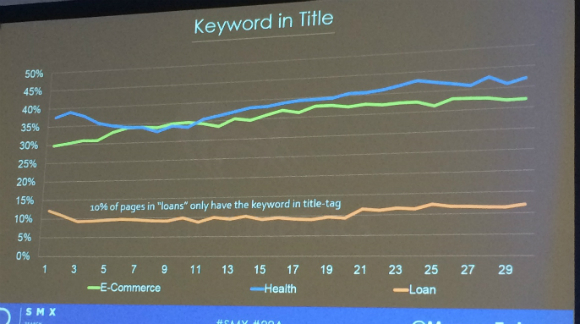

Keyword in Title:

Here’s a traditional SEO optimization tactic. In this analysis, they accounted for stemming and variations.

In the loan category, only 10 percent of pages have the keyword in the title tag.

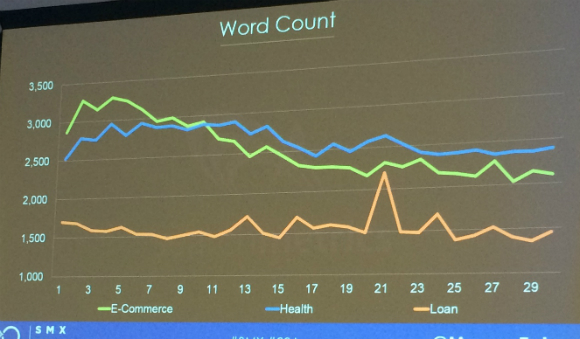

Word count and interactive elements:

For e-commerce and health, pages that are more successful have more content and more interactive elements.

Why do traditional ranking factors fail to explain these examples? If you look behind the curtains, you see that Google has changed a lot, with Hummingbird adding in machine learning.

Results of Searchmetrics’ study

They emulated RankBrain and gave search results a relevance score. This score is based on how relevant a result is to a query. They found around 25 relevance ranking factors to assess relevance.

Relevance factors are different for each keyword, so Tober says they can’t provide a table of all the relevance factors.

For example, 9 out of 10 ecommerce websites have an “add to cart” function above the fold. The result at rank #9 does not. However, it has the highest relevance score of the top 30, and that’s why it ranks.

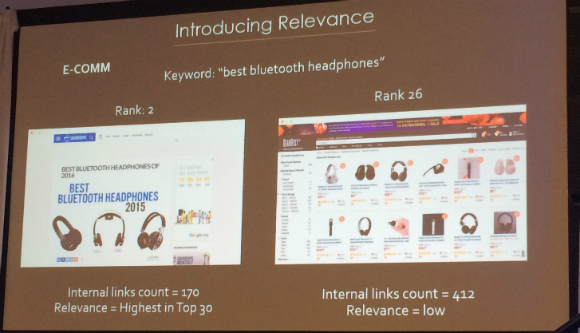

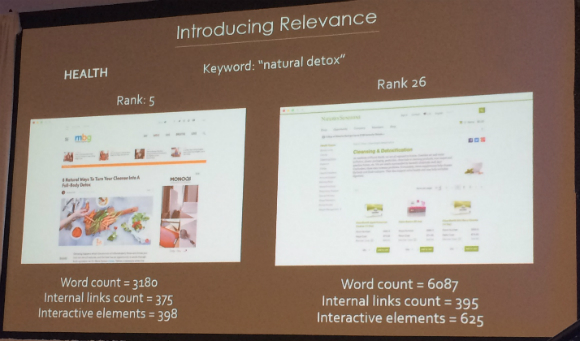

Another example: for the query “best bluetooth headphones,” compare the number of internal links for each result with its relevance score.

And another example, for the keyword “natural detox” you’ll see the word count comparison for two results, with the higher ranking result having fewer words, fewer internal links and fewer interactive elements.

Content with a high relevance score matches user intention, is logically structured and comprehensive, offers a good user experience, and deals with topics holistically. Holistic means that other topics related to the topic are covered on this page.

Key Findings

- Top ranking factors are different depending on keyword set.

- Relevance ranking factors dominate results across all keyword sets.

- All previous examples can be explained by having a higher relevance score.

- This score overpowered other ranking factors, meaning that these pages ranked highly.

Outlook for SEO

- SEO is as important as ever, but it’s changing.

- RankBrain is not used on all queries. For example, short/popular queries with well-known results aren’t filtered by RankBrain.

- Relevance is crucial for good rankings, because RankBrain can detect how relevant your content is.

- Make sure your content matches user/query intent.

When Google already knows the best results, RankBrain is not used. He believes RankBrain is filtering long-tail queries. Relevance is crucial for good rankings and RankBrain can detect how relevant our content is.

The Future of Search

Tober says we’re in for an abundance of redundancy. He explains that machine learning and Searchmetrics share this philosophy, which he says applies for content creation, too:

- Incremental improvements through powerful data insights

- Using machine and deep learning to make sense of complex data

- A data-driven approach is necessary to make sense of all the redundancy online

Eric Enge: What Is RankBrain and How Does it Work

Eric had brain surgery in 2003. Does that make him a RankBrain expert? No, but his company Stone Temple Consulting did a study of Google results before and after RankBrain to see what’s changed.

Notable quote from the Bloomberg article announcement:

“RankBrain interprets language, interprets your queries, in a way that has some of the gut feeling and guessability of people.”

Google is trying to understand the true meaning of queries and do a better job of providing results that are relevant.

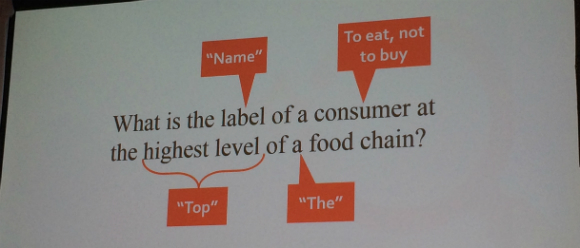

Some basic language analysis concepts figure in here. Take, for example, stop words. Google has traditionally stripped stop words out of a query or indexed page to simplify the language analysis.

But there are places where this practice doesn’t work. Take the query “the office” as an example. Someone wants the TV show, but Google may give results for your office. A more sophisticated language analysis might look for those two words capitalized in the middle of a sentence as a cue that this is a specific kind of “the office.”

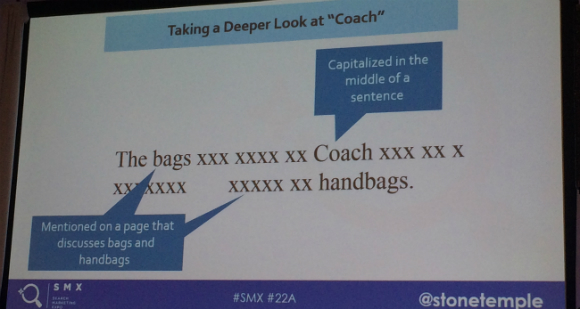

Another example is “coach,” which sometimes refers to a brand. Google may have had to manually patch the results if they find poor engagement on SERPs for a query like this. Here’s a way as humans we might find that the Coach brand is being referenced, which Google ultimately wants to be able to recognize algorithmically:

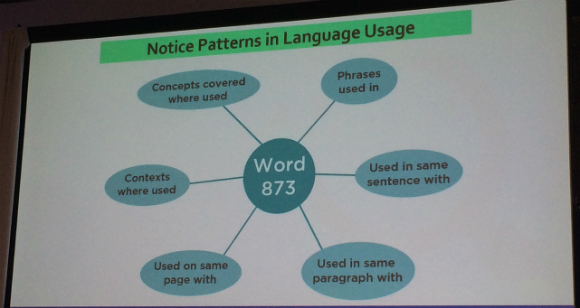

RankBrain is trying to understand different kinds of relationships in language. On the topic of RankBrain, Gary Illyes told Enge it had to do with “being able to represent strings of text in very high-dimensional space and ‘see’ how they relate to one another.”

RankBrain gets at the context: what other words are used on the same page, in the same paragraph, in the same sentence, and so on. It can notice patterns in language usage.

Along with the example of the stop word “the” affecting the query, Enge also shows how certain words were hard for Google before RankBrain:

- “The” — classic stop word, but in some cases critical to phrase meaning (e.g., “The Office”)

- “Graphic” — could relate to violence, language, or design (meaning determined by context)

- “Without” — negatives traditionally ignored by Google, but in some cases critical to meaning of page

Example queries:

This is the example offered in the Bloomberg article.

Another troublesome example query that Gary Illyes shared in an interview with Enge: “Can you get 100% score on Super Mario without walkthrough?”

The word “without” was traditionally taken out of a query, so now the user isn’t getting the answer they were looking for. This is an example of how Google was able to improve the results with RankBrain.
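To see why stripping stop words can lose meaning, here is a small sketch. The stop-word list is hypothetical and chosen for illustration (the session did not give Google’s list); it includes “without” only because Enge said negatives were traditionally dropped.

```python
# Hypothetical stop-word list for illustration; not Google's actual list.
STOP_WORDS = {"the", "a", "an", "on", "can", "you", "without"}


def strip_stop_words(query):
    """Lowercase the query, drop question marks, and remove stop words."""
    words = query.lower().replace("?", "").split()
    return [word for word in words if word not in STOP_WORDS]


print(strip_stop_words("the office"))
print(strip_stop_words("Can you get 100% score on Super Mario without walkthrough?"))
```

After stripping, “the office” is reduced to “office,” and the Super Mario query keeps “walkthrough” but loses “without,” which reverses what the searcher asked for.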

Stone Temple Consulting’s Study

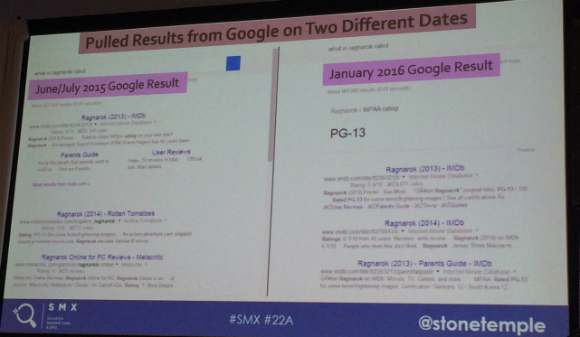

From their database of 500K queries, they looked for examples of queries that Google didn’t understand in June/July 2015 and compared those results with the January 2016 results.

In the left example, you see that Google doesn’t answer the query about Ragnarok, and in the right we see that Google did answer the question.

To find queries that Google misunderstood, they removed queries where it was unclear what the searcher meant, or that didn’t make sense in other ways. Here’s their end aggregate analysis:

- Total Queries We Found: 163

- Results Improved: 89

- Results Improved (%): 54.6 percent

How did the results improve? The 89 “better results” the study found were improved in three basic ways:

- 39 results (43.8 percent) had an improved answer box.

- 2 results (2.2 percent) added maps.

- 48 results (53.9 percent) had improved search results pages.
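The percentages above follow directly from the counts. A quick check, using only the numbers reported in the session (the variable names and the `pct` helper are just for this illustration):

```python
total_queries = 163
improved = 89
breakdown = {"answer box": 39, "maps": 2, "search results page": 48}

# The three kinds of improvement should account for every improved result.
assert sum(breakdown.values()) == improved


def pct(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


print("improved overall:", pct(improved, total_queries))
for kind, count in breakdown.items():
    print(kind, pct(count, improved))
```

Note that the three breakdown percentages are shares of the 89 improved results, not of all 163 queries.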

RankBrain did a good job of parsing the query and instructing Google’s back-end retrieval systems to get the right results. Enge believes that Google can pass a better query not just to web results but also to featured snippets and other algorithms.

Enge shows a January 2016 SERP in which the Wikipedia entry for Abz Love appears for the query “who is abs.” He explains that Google now knows to give weight to the “who is” portion of the query, even though Abz changed the way he spells his name.

Types of improvements they found included:

- Phrase interpretation improved (e.g., “YouTube Plus”)

- Word interpretation improved (e.g., “weak”)

- Good user query (e.g., “convert glory into an adjective”)

- Poor user query (e.g., “convert into million dollars”)

Phrase interpretation and word interpretation improved. About two-thirds of the time, the user query was pretty clear to a human and Google didn’t understand, while the other third of the time, the query wasn’t clear originally.

Impact on SEO

Relevance and context are growing in importance for ranking. Enge sees a shift in that direction, although he’s not sure that all of it is due to RankBrain. He doesn’t see a lot of direct impact on SEO from RankBrain right now. The biggest change happening in SEO is with content, comprehensiveness and relevance.

Summary of key SEO implications:

- More obscure phrases may bring up your page.

- Yes, you still need to do keyword research.

- You should increase your emphasis on truly natural language.

Danny Sullivan says his takeaway is, what are you really going to do any differently? Create great content for humans, which Google will reward like they’ve always said they do.

For more information regarding Google’s RankBrain, read our post on The REAL Impact of RankBrain on Web Traffic.


4 Replies to “RankBrain: What Do We Know About Google’s Machine-Learning System? #SMX”

What do you think about CTR in the case of RankBrain? Does CTR have any impact on search ranking? Thanks for sharing this, Virginia.

RankBrain’s focus is on understanding query intent, so in and of itself, it has no direct impact on CTRs. Its output can have an impact on CTR, however. If it does its job well, the query results should more closely match the searcher’s intent, so better results are at the top, garnering more clicks and impacting CTR.

Hi Emily, thank you! Did you read Larry Kim’s RankBrain and CTR post over at Moz, by chance? :) https://moz.com/blog/does-organic-ctr-impact-seo-rankings-new-data “RankBrain may just be the missing link between CTR and rankings.” Larry and his data have me convinced. And I’m remembering that this CTR and rankings connection was predicted in Rand Fishkin’s 2015 Pubcon keynote https://www.bruceclay.com/blog/seo-in-a-two-algorithm-world-pubcon-keynote-by-rand-fishkin/ so I could see the connective tissue lying in RankBrain, sure. I’m not an engineer, but it all follows to me.
