7 SEO Best Practices You Can’t Ignore if You Want to Rank in the Organic Search Results

SEO is a fast-moving industry that is always evolving. One Google announcement, a current event or a change in the competitive landscape can alter how you go about your SEO strategy in an instant.

But we do have best practices that stand the test of time. How we carry them out might evolve, but they remain rooted in the fundamentals of good SEO. And when you follow them, you can better weather any storms that come your way.

Here are seven SEO best practices you can’t ignore if you want to compete in the organic search results.

- Create the Right Type of Content

- Meet or Beat the Top-Ranked Content

- Create a Good User Experience

- Optimize Your Images

- Silo Your Website

- Focus on Link Earning Not Link Building

- Manage Duplicate Content

1. Create the Right Type of Content

Every search query/keyword has a different intent behind it — what the search engine user is trying to do. Google knows this and serves up different types of content to meet those needs.

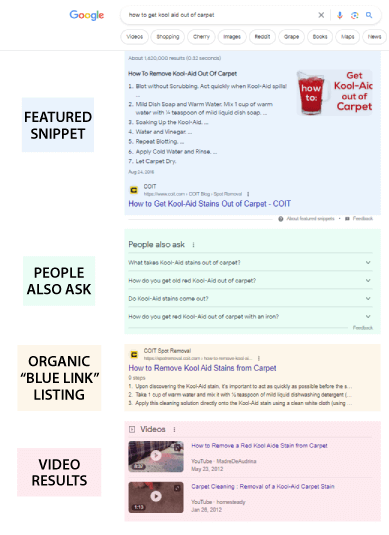

There will always be the blue links, which lead to webpages. But often, there are other types of content as well, like video, images and much more. This is what we call engagement objects – SERP features that engage and ultimately make money for Google.

An engagement object is a SERP feature shown on a search engine results page (SERP) that falls outside of the traditional organic search results (i.e., the blue links).

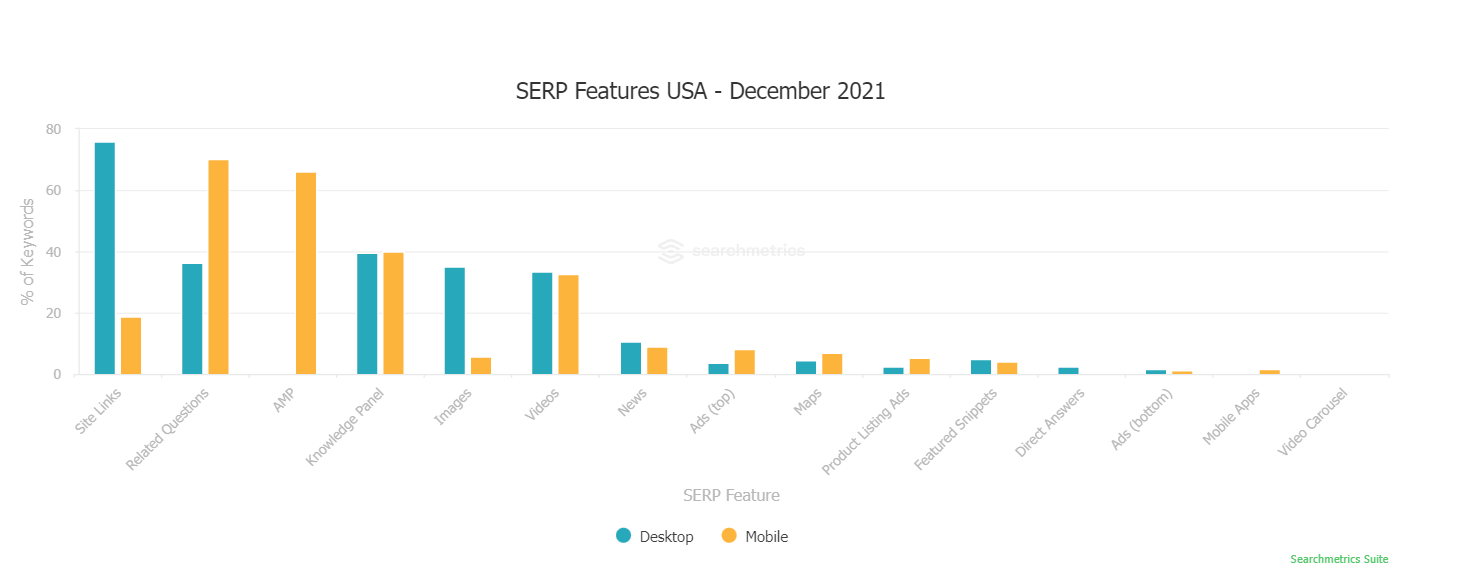

Searchmetrics keeps track of the most common SERP features that show up throughout the year with its SERP Features Monitor.

So, how do you create and optimize the right type of content to match the search query? Through what we call a whole-SERP SEO strategy.

A whole-SERP SEO strategy analyzes the features that show up most in the search results for target keywords and then optimizes for them.

The first step is to take the keywords you want to rank for, then analyze the content in the search results that is showing up for them. Is it mostly blue links? Are there videos? Images? What else?

This will help you set the content strategy for the type of content you are going to create. A whole-SERP SEO strategy gives you a roadmap for the type of content you need in your SEO program.
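As a rough sketch of that first step, you could record which SERP features appear for each target keyword and tally them. The keywords, feature labels and the 50% cutoff below are illustrative assumptions, not data from any tool.

```python
from collections import Counter

# Hypothetical, hand-collected observations: for each target keyword,
# the SERP features seen on page one. These values are illustrative only.
serp_observations = {
    "how to build shelves": ["video", "images", "people_also_ask"],
    "diy shelving ideas": ["images", "video"],
    "floating shelf brackets": ["shopping", "images"],
}


def feature_frequency(observations):
    """Return the share of keywords on which each SERP feature appears."""
    counts = Counter()
    for features in observations.values():
        # Use a set so a feature is counted once per keyword.
        counts.update(set(features))
    total = len(observations)
    return {feature: count / total for feature, count in counts.items()}


def prioritized_features(observations, min_share=0.5):
    """List features seen on at least min_share of keywords, most common first."""
    shares = feature_frequency(observations)
    ranked = sorted(shares.items(), key=lambda item: (-item[1], item[0]))
    return [feature for feature, share in ranked if share >= min_share]


print(prioritized_features(serp_observations))
```

In this made-up sample, images and video show up for most keywords, which would suggest planning image and video content alongside standard webpages.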

This strategy can also help combat the phenomenon of “zero clicks.” A zero-click search result happens when Google is able to answer a search query or facilitate an action right within the search results page.

2. Meet or Beat the Top-Ranked Content

Knowing what type of content to create is the first step. How you create and optimize the content for search engines and users is the next step.

SEO is a game of being the least imperfect. I say least imperfect because no one is going to optimize a piece of content precisely to Google’s algorithms. So, all content in the search results is imperfect when it comes to optimizing.

That said, the goal is to be least imperfect compared to your competition. All SEO programs should work to beat the competition, not the algorithm.

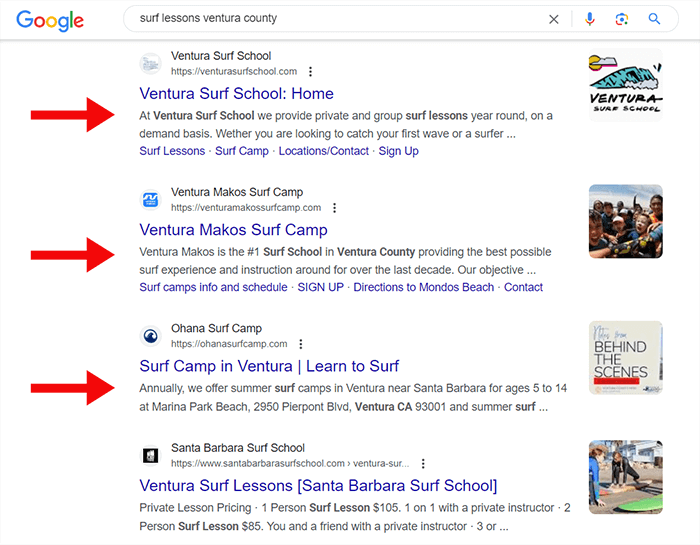

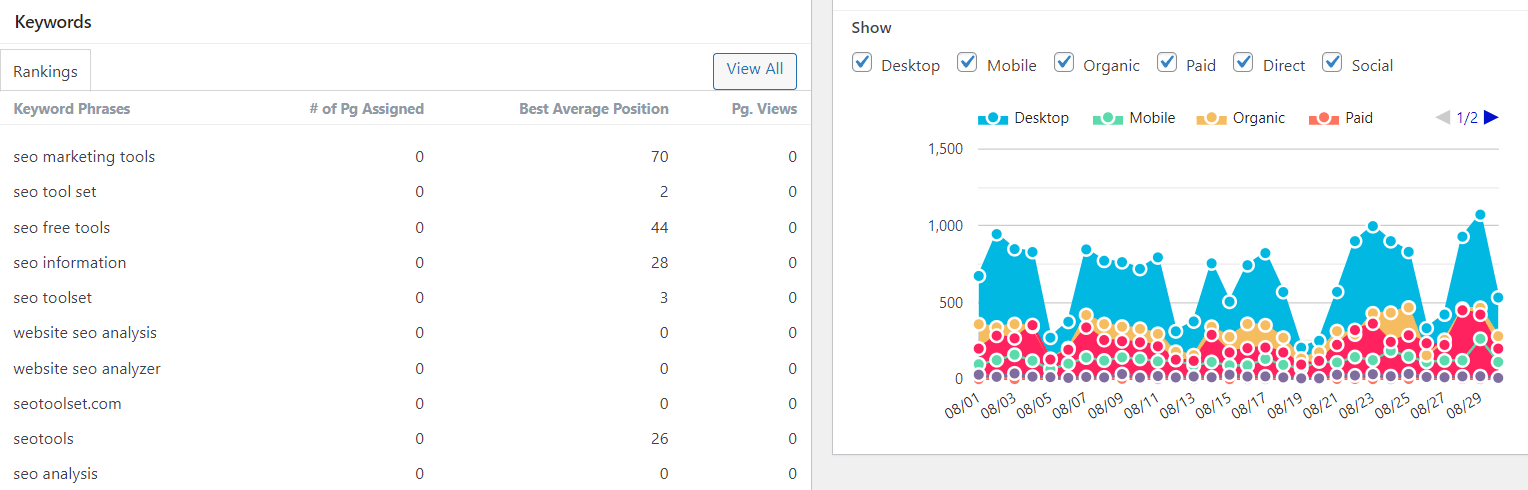

Here, you want to understand what makes the top-ranked content for your keyword tick. Start analyzing the top results for each keyword. Of course, you could do this manually, but SEO tools are going to save you a lot of time and effort here.

For example, you could use an SEO tool like our Multi-Page Information tool (free version) and see the on-page SEO factors of multiple competitors.

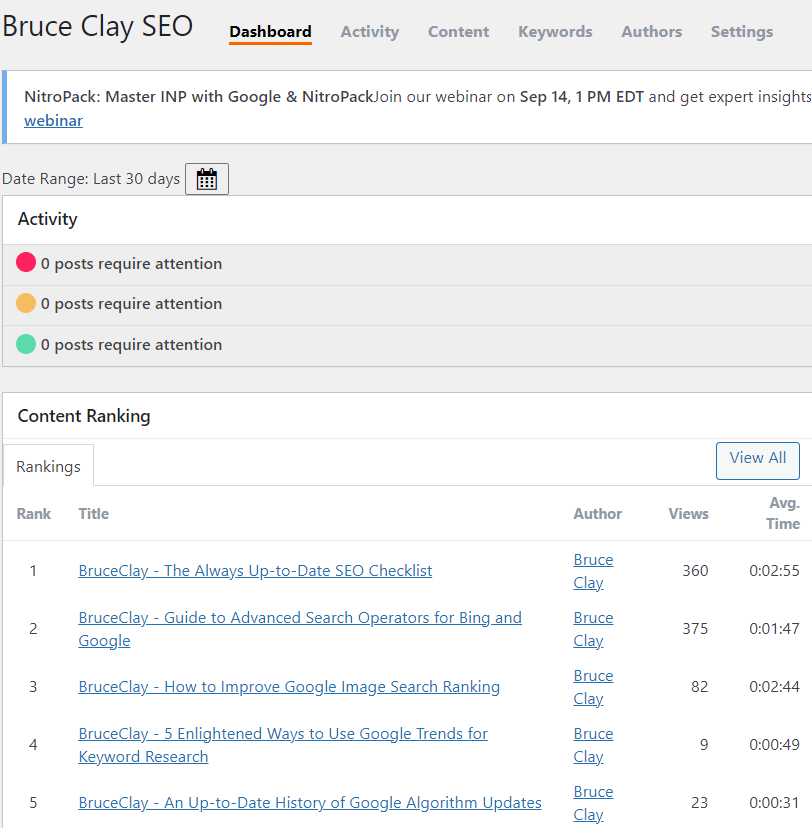

Or, if you are using a WordPress site, you can use our WordPress SEO plugin to get real-time data on the top-ranked pages for your keywords.

That means customized SEO data for your content versus following best practices that are typically generic.

It also means knowing how many words to include in your meta tags and your body content, plus the readability score — all based on the top-ranked content.
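To illustrate the idea of basing targets on the top-ranked content, here is a minimal sketch that summarizes word counts from competing pages and flags where your own page falls outside their range. The field names and numbers are hypothetical, and this is not how any particular SEO tool computes its recommendations.

```python
from statistics import median

# Hypothetical measurements from the top-ranked pages for one keyword.
top_pages = [
    {"title_words": 9, "description_words": 24, "body_words": 1450},
    {"title_words": 11, "description_words": 28, "body_words": 1800},
    {"title_words": 8, "description_words": 22, "body_words": 1200},
]

FIELDS = ("title_words", "description_words", "body_words")


def target_ranges(pages, fields=FIELDS):
    """Summarize each field as (min, median, max) across competing pages."""
    summary = {}
    for field in fields:
        values = [page[field] for page in pages]
        summary[field] = (min(values), median(values), max(values))
    return summary


def gaps(own_page, pages):
    """Report fields where own_page falls outside the competitors' min-max range."""
    report = {}
    for field, (low, _mid, high) in target_ranges(pages).items():
        value = own_page[field]
        if value < low:
            report[field] = f"below range ({value} < {low})"
        elif value > high:
            report[field] = f"above range ({value} > {high})"
    return report


my_page = {"title_words": 10, "description_words": 15, "body_words": 600}
print(gaps(my_page, top_pages))
```

The output is a starting point for editing, not a rule: a page inside the range can still be weaker than the competition on substance.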

These types of tools will help you understand how to optimize the content you are creating. But you should also look closer at the nature of the top-ranked content as well before you start writing.

Google values experience, expertise, authoritativeness and trustworthiness (E-E-A-T), as outlined in its Search Quality Evaluator Guidelines. One component of E-E-A-T is that the information you share is consistent with what the top-ranked or highest-quality webpages on the topic say.

As Google puts it in its Search Quality Evaluator Guidelines (where MC stands for main content):

Very high quality MC should be highly satisfying for people visiting the page. Very high quality MC shows evidence of a high level of effort, originality, talent, or skill. For informational pages, very high quality MC must be accurate, clearly communicated, and consistent with well-established expert consensus when it exists. Very high quality MC represents some of the most outstanding content on a topic or type that’s available online. The standards for Highest quality MC may be very different depending on the purpose, topic, and type of website.

I discussed what this means practically in The Complete Guide to the Basics of Google’s E-E-A-T.

For instance, say you have content that states that blueberries can cure cancer. Even if you feel you have the authority to make this claim, you are competing against “your money or your life” (YMYL) content, and you will not be considered an expert for a query about cancer because the claim is not supported elsewhere.

And don’t forget: Once you have created a great piece of content, don’t skimp on the headline. A good headline can get you more clicks and drive more traffic than a lackluster one.

Much of the advice and tools I’ve discussed so far apply to getting data for and optimizing standard web pages (the blue links). If you are up against videos, for example, you will also need to examine them closely and think about your YouTube SEO efforts.

3. Create a Good User Experience

Once a person reaches your website from the organic search results, will they have a good experience?

You should care about user experience because you want to make sure you get the most out of the traffic that you send to your website. If all those efforts lead to a bad webpage and the user quickly leaves, then you have wasted your time and money.

Google wants to make sure websites are providing a good user experience, too. So Google has developed ranking signals to ensure only the websites that provide the best experience will compete on Page 1 of the search results.

One thing that Google may look at is when a large percentage of users from the search results go to your webpage and then immediately click back to the search results. This could be an indication of a poor user experience and may impact your future rankings.

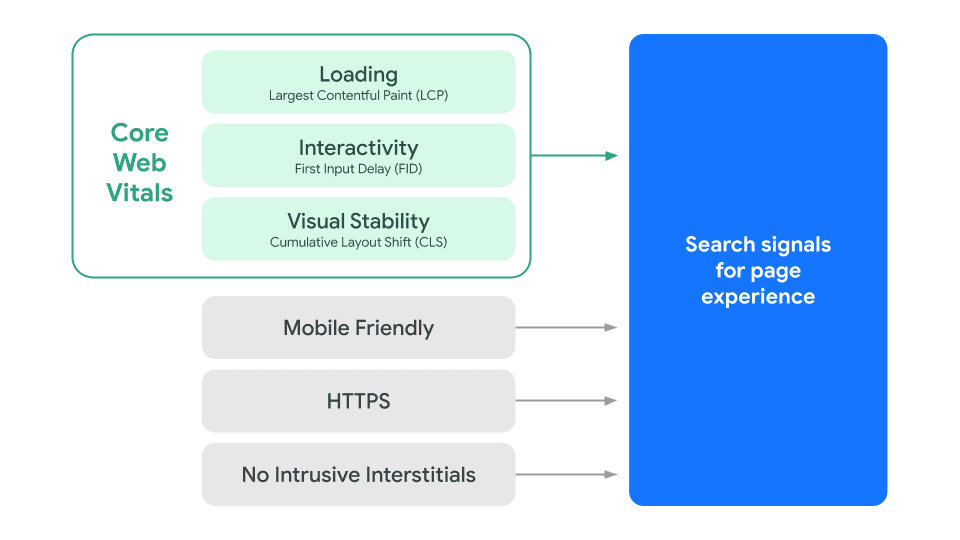

Then you have the page experience algorithm update, which hit in 2021 and combines pre-existing ranking signals such as:

- Mobile-friendliness

- HTTPS (secure websites)

- Non-intrusive interstitials

… with new ranking signals that include what Google calls “core web vitals.” Core web vitals look at things like:

- Page load performance

- Responsiveness

- Visual stability

There is much to do in this area to optimize a website for user experience. You can download our e-book: Google’s Page Experience Update: A Complete Guide, to learn more about how to get your website up to speed.
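If you already have core web vitals measurements for a page, a small script can rate them. The sketch below assumes the thresholds Google has published for Largest Contentful Paint, Interaction to Next Paint and Cumulative Layout Shift; those thresholds and metrics can change, so treat the numbers as assumptions to verify. The metric key names are made up for this example.

```python
# Thresholds assumed from Google's published guidance at the time of writing.
# Each entry is (upper bound for "good", upper bound for "needs improvement").
THRESHOLDS = {
    "lcp_seconds": (2.5, 4.0),  # Largest Contentful Paint: page load performance
    "inp_ms": (200, 500),       # Interaction to Next Paint: responsiveness
    "cls": (0.1, 0.25),         # Cumulative Layout Shift: visual stability
}


def rate(metric, value):
    """Classify one measurement as good, needs improvement or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


def rate_page(measurements):
    """Rate every metric in a dict of hypothetical field measurements."""
    return {metric: rate(metric, value) for metric, value in measurements.items()}


print(rate_page({"lcp_seconds": 2.1, "inp_ms": 350, "cls": 0.3}))
```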

4. Optimize Your Images

You need to optimize all your content assets so that they have the opportunity to rank. That includes images.

Visual search and Google Images have been a focal point for Google for some time. More and more images are showing up in response to search queries. seoClarity reported that in 2021, more than 55% of keywords returned image results.

Google wants to rank great images, but it also wants to ensure those images are within the context of great content, too. I wrote about this in an earlier article on how to improve image search ranking:

We’ve all had the experience of finding an image and clicking through to a not-so-great webpage. To prevent this, the Google Images algorithm now considers not only the image but also the website where it’s embedded.

Images attached to great content can now do better in Google Images. Specifically, the image-ranking algorithm weighs these factors (besides the image itself):

Authority: The authority of the webpage itself is now a signal for ranking an image.

Context: The ranking algorithm takes into account the context of the search. Google uses the example of an image search for “DIY shelving.” Results should return images within sites related to DIY projects … so the searcher can find other helpful information beyond just a picture.

Freshness: Google prioritizes fresher content. So ranking images will likely come from a site (a site in general, but we believe the actual webpage in question) that’s been updated recently. This is probably a minor signal.

Position on page: Top-ranked images will likely be central to the webpage they’re part of. For example, a product page for a particular shoe should rank above a category page for shoes.

Of course, there are all sorts of optimization techniques you can use to improve image ranking. We have written elsewhere about 17 important ways you can optimize your images for search, which include:

- Tracking image traffic

- Creating high-quality, original content

- Using relevant images

- Having a proper file format

- Optimizing your images

- Always creating Alt text

- Making use of the image title

- Creating an image caption

- Using a descriptive file name

- Implementing structured data

- Considering image placement on the page

- Analyzing the content around the image

- Being careful with embedded text

- Creating page metadata

- Ensuring fast load time

- Making sure images are accessible

- Creating an image sitemap
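Two of the items above, alt text and descriptive file names, are easy to spot-check with a script. This is a minimal sketch using Python's standard HTML parser. The list of "non-descriptive" file name prefixes is an assumption for illustration, and an empty alt attribute can be legitimate for purely decorative images, so treat the results as items to review.

```python
from html.parser import HTMLParser

# Assumed prefixes that suggest a camera or CMS default file name.
GENERIC_PREFIXES = ("img_", "dsc", "image")


class ImageAudit(HTMLParser):
    """Collect (src, issue) pairs for <img> tags worth reviewing."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or ""
        alt = (attributes.get("alt") or "").strip()
        if not alt:
            self.issues.append((src, "missing alt text"))
        filename = src.rsplit("/", 1)[-1].lower()
        if filename.startswith(GENERIC_PREFIXES):
            self.issues.append((src, "non-descriptive file name"))


def audit_images(html):
    """Return the list of image issues found in an HTML string."""
    parser = ImageAudit()
    parser.feed(html)
    return parser.issues


sample = (
    '<p><img src="/photos/IMG_1234.jpg">'
    '<img src="/photos/oak-floating-shelf.jpg" alt="Oak floating shelf"></p>'
)
print(audit_images(sample))
```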

5. Silo Your Website

Creating and optimizing quality content is really important. But just as important is how you organize all the content on your website.

Google has indicated more than once that it not only looks at the quality of a webpage but also the site as a whole when ranking content.

In its Search Engine Optimization Starter Guide, Google says:

Although Google’s search results are provided at a page level, Google also likes to have a sense of what role a page plays in the bigger picture of the site.

In its Search Quality Evaluator Guidelines, Google says it looks at the website as a whole to determine if the website is an authority on topics.

So what does this mean? When someone searches for something on Google, one of the ways that the search engine can determine the most relevant webpage for a search is to analyze not only the webpage but also the overall website.

Google may be looking to see if a website has enough supporting content for the keywords/search terms on the website overall. Having enough clearly organized, information-rich content helps create relevance for a search.

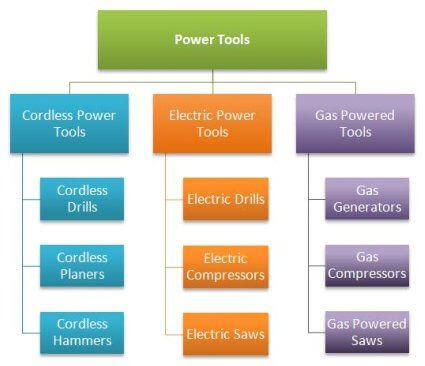

We call this SEO siloing. SEO siloing is a way to organize your website content based on the way people search for your site’s topics. Its goal is to make a site relevant for a search query so that it has a better chance of ranking.

In practice, that means building a library of content around primary and long-tail keywords on your website and then connecting that content via your internal linking structure.

Google advocates for what SEO siloing does. In its Search Engine Optimization Starter Guide, Google says:

Make it as easy as possible for users to go from general content to the more specific content they want on your site. Add navigation pages when it makes sense and effectively work these into your internal link structure. Make sure all of the pages on your site are reachable through links, and that they don’t require an internal “search” functionality to be found. Link to related pages, where appropriate, to allow users to discover similar content.
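One way to check the "reachable through links" advice is to walk your internal link map from the home page. The sketch below assumes you already have a map of which pages link to which (for example, exported from a crawler). The URLs are hypothetical, and the approach is a plain breadth-first search, not a representation of how Google crawls.

```python
from collections import deque

# Hypothetical internal link map: page -> pages it links to.
links = {
    "/": ["/shelving/", "/tools/"],
    "/shelving/": ["/shelving/floating/", "/shelving/garage/"],
    "/shelving/floating/": ["/shelving/"],
    "/shelving/garage/": [],
    "/tools/": ["/tools/drills/"],
    "/tools/drills/": [],
    "/shelving/corner/": ["/shelving/"],  # no other page links here
}


def click_depths(link_map, start="/"):
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in link_map.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(link_map, start="/"):
    """Pages in the map that cannot be reached by following links from start."""
    reachable = click_depths(link_map, start)
    return sorted(page for page in link_map if page not in reachable)


print(orphan_pages(links))
print(click_depths(links)["/tools/drills/"])
```

Orphan pages are candidates for new internal links from the relevant silo, and pages with a high click depth may deserve a link from a navigation or category page.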

There is a lot that goes into SEO siloing, and I recommend reading these articles:

- 5 Times When SEO Siloing Can Make or Break Your Search Engine Rankings

- A Jam-Packed Guide on Internal Linking for SEO

6. Focus on Link Earning Not Link Building

Link building is not a numbers game anymore. Search engines want to see that a website has quality, relevant links.

Google’s John Mueller confirmed this in a video, stating that:

“We try to understand what is relevant for a website, how much should we weigh these individual links, and the total number of links doesn’t matter at all. Because you could go off and create millions of links across millions of websites if you wanted to, and we could just ignore them all.”

We have seen client websites with fewer but higher-quality inbound links outperform their competition. So, what does “link earning” look like?

- Avoiding known spammy link-building tactics, such as mass email requests for links, purchasing links and participating in link farms.

- Understanding the “gray areas” of what could be considered link spam, such as paid guest posting.

- Creating quality content that earns relevant links.

- Getting creative with how you earn links and being diligent about how you maintain them.

You can learn more about how to create a good link-earning SEO program in our e-book, “The New Link Building Manifesto: How To Earn Links That Count.” In it, you’ll find a roadmap for earning quality, relevant links, including 50 ways to earn links safely and effectively.

7. Manage Duplicate Content

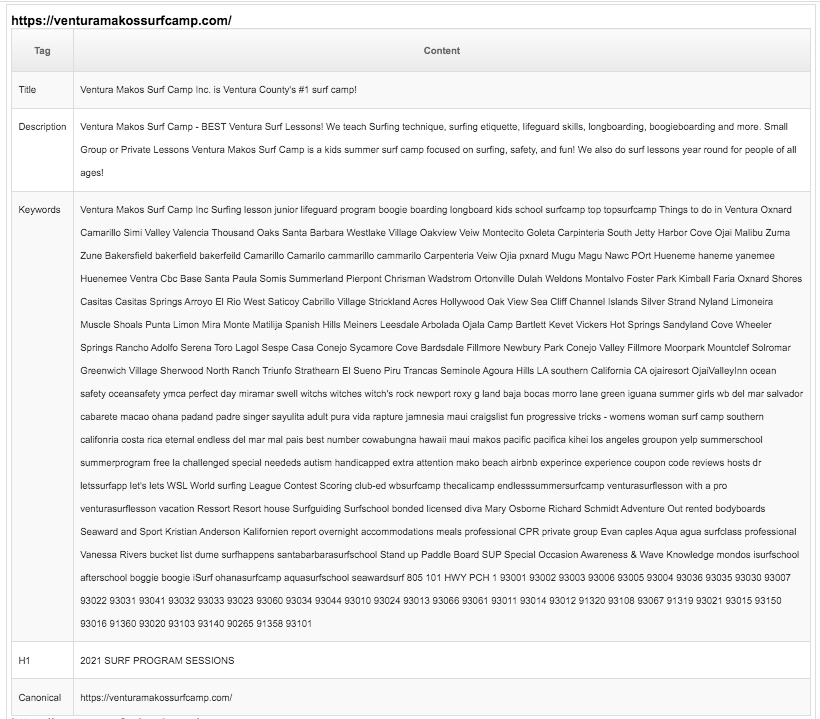

Duplicate content can impact your rankings. And, depending on what type of duplicate content you have on your website, it can even trigger a manual action by Google if it’s considered spam.

There are two types of duplicate content:

- Duplicate content involving webpages on your site only

- Duplicate content involving webpages on your site and other sites

When you have duplicate content involving your website and other sites, Google may flag this as spam (for example, if your site is scraping or copying content from another).

The good news is that most websites are only dealing with non-spammy duplicate content on their own websites. This is when you have content (two or more webpages) that is the same or similar.

This can impact your rankings. When Google is presented with two of your webpages that appear to be too similar, the search engine will choose the page it believes is the most relevant and filter the other page or pages out of the results.

Google’s Mueller explains in a video:

“With that kind of duplicate content it’s not so much that there’s a negative score associated with it. It’s more that, if we find exactly the same information on multiple pages on the web, and someone searches specifically for that piece of information, then we’ll try to find the best matching page.

So if you have the same content on multiple pages then we won’t show all of these pages. We’ll try to pick one of them and show that. So it’s not that there’s any negative signal associated with that. In a lot of cases that’s kind of normal that you have some amount of shared content across some of the pages.”

So, what to do? We’ve found that the most common types of duplicate content are the following:

- Two site versions

- Separate mobile site

- Trailing slashes on URLs

- CMS problems

- Meta information duplication

- Similar content

- Boilerplate content

- Parameterized pages

- Product descriptions

- Content syndication

And of those common types, we tend to see duplicate meta information as a top culprit. So, it’s important to always create unique meta tags.
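Duplicate meta information is straightforward to detect once you have each page's title and description in hand. Here is a minimal sketch; the page data is hypothetical, and the matching is a simple comparison after lowercasing and collapsing whitespace, which is an assumption about what counts as "the same."

```python
from collections import defaultdict

# Hypothetical crawl export: one record per page.
pages = [
    {"url": "/shelving/", "title": "DIY Shelving Guide",
     "description": "Plans and tips."},
    {"url": "/shelving/floating/", "title": "DIY Shelving Guide",
     "description": "How to hang floating shelves."},
    {"url": "/shelving/garage/", "title": "Garage Shelving",
     "description": "Plans  and tips. "},
]


def duplicate_meta(records, field):
    """Group URLs that share the same value for field, ignoring case and spacing."""
    groups = defaultdict(list)
    for record in records:
        key = " ".join(record[field].lower().split())
        groups[key].append(record["url"])
    return {value: urls for value, urls in groups.items() if len(urls) > 1}


print(duplicate_meta(pages, "title"))
print(duplicate_meta(pages, "description"))
```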

If you’re on a WordPress site, you can use our WordPress SEO plugin to help monitor and detect duplicate content issues in your meta tags.

For more on how to address the common types of duplicate content on your website, see Understanding Duplicate Content and How to Avoid It.

Closing Thoughts

These SEO best practices are not the end of your SEO work, but they are the beginning of creating a winning SEO strategy that will respond to any curve ball that comes your way.

Schedule a free 1:1 consultation to learn more about how you can boost your SEO profile and maximize your visibility online.

FAQ: How does duplicate content impact search rankings, and what types of duplicate content should be managed?

Duplicate content has long been a cause for concern among website owners and digital marketing professionals, who worry that duplicated material could negatively affect search rankings. Search engines struggle to provide users with relevant, original information that matches their search queries if duplicates appear within results pages.

When search engines encounter duplicate content, they face a dilemma: they must determine which version is most relevant and deserves priority in ranking. As noted above, Google typically does not attach a negative score to ordinary duplicate content; instead, it picks one version to show and filters the others out of the results. That filtering can still reduce a website’s visibility and organic traffic, making it crucial for webmasters to address duplicate content issues.

Website owners should be aware of and manage several types of duplicate content. The first type is identical content found on multiple pages within the same website. This can occur when a website generates multiple URLs for the same content, leading to duplicate versions. Search engines might struggle to decide which URL to prioritize, potentially diluting the page’s ranking potential.

Another type of duplicate content is syndicated or copied content from other websites. While syndication can be a legitimate practice, ensuring that the content is properly attributed and adds value to the website is essential. Otherwise, search engines may consider it duplicate and penalize the website for duplicate content.

E-commerce websites often face challenges with duplicate product descriptions. Similar products may have identical or nearly identical descriptions, leading to duplicate content issues. It is advisable to provide unique, compelling descriptions for each product to avoid these problems and improve search rankings.

Finally, duplicate content can also arise from printer-friendly versions, mobile versions, or session IDs appended to URLs. These variations in URLs can confuse search engines and result in duplicated content. Implementing canonical tags and managing URL parameters can help resolve these issues and ensure search engines understand the preferred version of the content.

To manage duplicate content effectively, website owners should take proactive steps. Conducting regular content audits to identify and address duplicate content is essential. Utilizing tools such as site crawlers and duplicate content checkers can aid in this process by scanning the website for duplicate instances and providing recommendations for improvement.

Once identified, duplicate content issues can be resolved through various means. One approach is to consolidate duplicate pages by redirecting them to, or merging their content under, a single URL. Implementing 301 redirects or rel=canonical tags can help guide search engines to the preferred version of the content and consolidate ranking signals.
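Before you can redirect or canonicalize, you need to know which URLs are variants of the same page. The sketch below groups URL variants by normalizing them. The normalization rules (preferring https, dropping "www.", stripping trailing slashes and ignoring a short list of tracking and session parameters) are assumptions for illustration; the right rules depend on how your own site is set up.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change the page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize(url):
    """Map a URL variant to the assumed preferred form of the same page."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit(("https", host, path, urlencode(kept), ""))


def group_variants(urls):
    """Return preferred URL -> variants, for pages with more than one variant."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {preferred: found for preferred, found in groups.items() if len(found) > 1}


def canonical_tag(url):
    """Build the rel=canonical link element pointing at the preferred URL."""
    return f'<link rel="canonical" href="{normalize(url)}">'


crawled = [
    "http://www.example.com/shelves/",
    "https://example.com/shelves",
    "https://example.com/tools",
]
print(group_variants(crawled))
print(canonical_tag("http://www.Example.com/shelves/?utm_source=news&color=oak"))
```

Each group is a candidate for a 301 redirect to the preferred URL or, where the variants must stay live, a canonical tag on each variant.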

For e-commerce websites, ensuring unique product descriptions and optimizing metadata can go a long way in avoiding duplicate content issues. Additionally, monitoring syndicated content and implementing proper attribution can help maintain a healthy balance between original and duplicate content.

Regularly monitoring website performance, traffic patterns and search engine rankings is crucial for detecting any potential duplicate content issues. Prompt action and continuous improvement will help maintain strong search rankings and enhance the overall user experience.

Duplicate content can significantly impact search rankings by confusing search engines and diluting ranking potential. Various types of duplicate content, such as identical pages within a website, syndicated content and product descriptions, should be managed effectively.

Step-by-Step Procedure:

- Conduct a comprehensive content audit to identify instances of duplicate content on your website.

- Use site crawlers or duplicate content checkers to scan the website and identify duplicate content.

- Prioritize resolving duplicate content issues on your own website’s pages before addressing external sources.

- For identical content found on multiple pages within the same website, determine the primary URL and implement 301 redirects from the secondary URLs.

- Ensure that all syndicated or copied content from other websites is properly attributed and adds value to your website.

- Review product descriptions on e-commerce websites and make them unique and compelling for each product.

- Regularly monitor the website for printer-friendly versions, mobile versions, or session IDs appended to URLs, and implement canonical tags to indicate the preferred version of the content.

- Manage URL parameters to eliminate duplicate content issues caused by session IDs or tracking parameters.

- Utilize tools such as site crawlers and duplicate content checkers to scan the website for new instances of duplicate content periodically.

- Review the recommendations provided by the tools and implement necessary changes to address duplicate content.

- Consolidate duplicate pages by redirecting them to, or merging their content under, a single URL.

- Implement 301 redirects from duplicate pages to the preferred version to guide search engines and consolidate ranking signals.

- Ensure that each product on e-commerce websites has a unique description and optimized metadata.

- Monitor syndicated content and verify that proper attribution is in place to differentiate it from duplicate content.

- Continuously monitor website performance, traffic patterns and search engine rankings to identify any new duplicate content issues.

- Take prompt action to resolve duplicate content problems as they arise.

- Regularly review and update your content strategy to avoid unintentional creation of duplicate content.

- Provide a seamless user experience by eliminating duplicate content, which can confuse and frustrate visitors.

- Stay updated with search engine guidelines and best practices to address duplicate content effectively.

- Continuously improve your website’s content and ensure it remains unique, relevant and valuable to users.

7 Replies to “7 SEO Best Practices You Can’t Ignore if You Want to Rank in the Organic Search Results”

Invaluable insights on SEO best practices that are impossible to ignore! Your guide offers a comprehensive approach, covering everything from keyword optimization to user experience. The emphasis on adaptability to algorithm changes and the importance of quality content align with the evolving digital landscape. A must-read for anyone serious about staying ahead in the world of SEO. Thanks for distilling these essential practices into actionable tips!

So much great information presented properly. My favorites are the SERP Feature image, very good visual to remind clients what and why we need certain content. The other is the Silo Structure Image, which always helps a client understand why we are asking them for certain content and continue with pages vs always pushing the next blog.

Great article Bruce. However, I feel you missed the Voice Search Optimization. Voice search is becoming more popular, thanks to the proliferation of voice assistants like Alexa, Google Assistant, and Siri. Optimizing your content for voice search by focusing on natural language, question-based queries, and local SEO can give you an edge in this growing segment of search.

What do you think? I’m Looking forward to more insightful content!

Hi Devjeet,

Thanks for your insight! We agree that question-based search is the future, and that on any voice communication device that voice is a preferred method of query. Yes, we need to optimize for voice, but the focus will be the question query and the expert answer. And of course we cannot forget video — not just an answer to a query, but showing the process is important.

I regularly follow you and read your texts and I am simply amazed by the tone in which you write some texts, I can read them again and again and again
