When the Answer Is Wrong: What We Risk When We Stop Questioning

Two men set out on a short hike above Vancouver, BC, trusting the advice ChatGPT had given them about the trail.

Wearing only sneakers, they soon realized they were unprepared for the conditions. Stranded in snow, they had to be rescued by local search-and-rescue teams, who hauled up boots and supplies.

This isn’t the first time it has happened, according to an article in Futurism. These men simply did what millions of people now do every day: ask an AI model for answers and assume the answer is 100% correct.

While a wrong turn on a mountain might be corrected with a rescue, other scenarios carry heavier consequences.

ChatGPT and other large language models sound authoritative, and that’s precisely the problem in a world where people aren’t double-checking the answers.

- The Veil of Authority

- The Octopus Date

- Who Owns the Mistakes?

- The Cost of AI Dependence

- Drowning in AI Slop

- The Trade-Off We Can’t Ignore

- Quick Solutions

- FAQ: How do I verify AI-generated content for accuracy before using or publishing it publicly?

- About Us

The Veil of Authority

People trust LLMs like ChatGPT for a variety of reasons — first and foremost because they believe the technology is accurate.

After all, LLMs compress knowledge into quick, polished answers, and they sound completely confident doing it.

But that trust often rests on a misunderstanding of how the technology actually works.

LLMs aren’t experts in the human sense. They are trained on vast amounts of human-generated data, which lets them predict plausible answers, but those predictions are not always right.

LLMs can sound authoritative because they’ve picked up on the style of how experts explain things.

In reality, they imitate the style of expertise while predicting the next most likely word in a sequence, based on probabilities learned during training.

So an LLM doesn’t inherently know whether an answer is true or false. When it hallucinates, it is generating something that sounds plausible but isn’t anchored in fact.

It’s this confidence that throws people for a loop.
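To make next-word prediction concrete, here is a deliberately tiny sketch in Python. It is a toy bigram model, not how ChatGPT is actually built (real LLMs use large neural networks that weigh far more context), and the three-sentence corpus and the function names are hypothetical, chosen only for illustration. It shows the core idea under those assumptions: the model picks whichever word most often followed the previous word in its training text, and no step checks whether the result is true.

```python
from collections import Counter, defaultdict

# Hypothetical toy "training data"; real LLMs train on vastly more text.
CORPUS = [
    "the trail is easy in summer",
    "the trail is easy for beginners",
    "the trail is icy in winter",
]


def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, word):
    """Convert follow-counts for `word` into probabilities (empty if unseen)."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    if total == 0:
        return {}
    return {w: c / total for w, c in followers.items()}


def generate(counts, start, max_words=4):
    """Greedily append the most frequent next word; nothing here checks facts."""
    words = [start]
    for _ in range(max_words - 1):
        followers = counts.get(words[-1])
        if not followers:
            break  # no known continuation, so stop
        words.append(followers.most_common(1)[0][0])
    return " ".join(words)


if __name__ == "__main__":
    model = train_bigrams(CORPUS)
    print(next_word_probs(model, "is"))  # easy ≈ 0.67, icy ≈ 0.33
    print(generate(model, "the"))        # the trail is easy
```

The toy model answers "the trail is easy" simply because "easy" followed "is" more often than "icy" did in its training text. Nothing in the code looks at actual trail conditions: the answer is plausible because it is frequent, not because it is verified.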

The Octopus Date

When Financial Times columnist Tim Harford asked ChatGPT to explain why it had sent him on a blind date with an octopus, the chatbot didn’t push back (even though Harford made up the whole thing in a prompt).

Instead, ChatGPT leaned into the prompt, saying, “I owe you both an apology and an explanation — and possibly a towel.”

It continued with a very detailed justification for the mix-up, even hallucinating what had supposedly happened on the date (the octopus said it was the best date she had had in years).

Harford argues that ChatGPT is nothing more than an improvisational partner, taking whatever is thrown at it and building on it.

The danger, of course, is that while improv works in comedy, it doesn’t work in medicine, finance or any other real-life scenario where the stakes are high.

For instance, one man relied on ChatGPT for medical diagnoses and delayed seeing a doctor because he trusted the technology, only to learn later that he had a potentially fatal illness, according to The Economic Times.

Who Owns the Mistakes?

When LLMs like ChatGPT spew inaccurate information that ultimately causes harm, who’s to blame?

Is it the companies that make the technology? The users who trust it? A mix of both? This accountability gap sets LLMs apart from the information tools that came before them.

On Wikipedia, for instance, a missing citation is treated as a serious problem. On Google, spammy SEO content can be devalued by search algorithms.

But LLMs merge fact and fiction seamlessly with nothing more than a disclaimer in fine print that says they “can make mistakes.”

These are the makings of a perfect storm, and one reason there have been Senate hearings on AI regulation.

In those hearings, OpenAI CEO Sam Altman, IBM’s Christina Montgomery and AI critic Gary Marcus discussed regulations that could ensure AI safety while supporting responsible development.

Still, companies like OpenAI take no responsibility for mistakes. Instead, OpenAI cautions users against relying on ChatGPT in lieu of professional advice.

The Cost of AI Dependence

Perhaps the more urgent question isn’t what AI can do for us but what it’s doing to us, a concern raised in an article in The Guardian.

A growing body of research already examines how AI use affects human creativity and critical thinking. Here are two compelling studies:

- An MIT study divided participants into three groups and asked them to write SAT essays using either OpenAI’s ChatGPT, Google’s search engine or nothing at all. Researchers recorded brain activity and found that ChatGPT users had the lowest brain engagement and “consistently underperformed at neural, linguistic, and behavioral levels.”

- Microsoft Research and Carnegie Mellon University surveyed knowledge workers across industries to examine how frequent use of generative AI affects problem-solving and critical thinking. Respondents reported greater efficiency and productivity with AI, but the findings point to a trade-off: heavier reliance on AI correlated with weaker analytical reasoning and less ability to solve problems without AI support.

While AI may boost efficiency in the short term, its long-term cost may be the quiet erosion of the skills that make us human.

Drowning in AI Slop

What happens when people’s most trusted source of information, the search engine, is increasingly powered by LLMs?

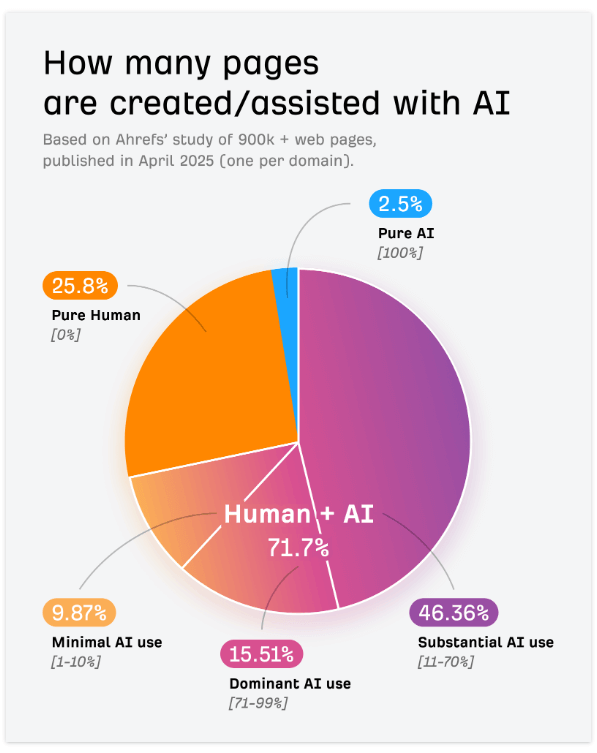

This is not science fiction. According to an Ahrefs study, 74% of newly created web pages already contain AI-generated content.

And if nothing changes, that percentage will only grow.

That is a problem, especially since research shows that people are poor at telling AI-generated content apart from human-written content.

Then there’s the rise of AI slop. One writer at The Guardian describes it as a cultural erosion that is “slowly killing the internet,” where “content created by real-life human beings is becoming something of a novelty these days.”

Soon, search results may become little more than an echo chamber: AI copying AI until the human element disappears entirely. (One study found that 10% of the sources cited in Google’s AI Overviews were themselves AI-generated.)

Search, the trusted go-to source of information for generations, may lose its authority.

So what are the search engines doing about it?

Google has rolled out algorithmic signals and spam policies targeting unhelpful AI-generated content, and it has updated its Search Quality Rater Guidelines to address generative AI.

But so far, this feels more like patchwork than a plan.

The Trade-Off We Can’t Ignore

As authority shifts from human experts to machines, the real danger lies not only in a wrong answer but in the habits we form when we stop questioning the answers we get.

The pressing question is: How much of ourselves (our judgment, our critical thinking, our willingness to doubt) are we willing to hand over for an instant answer?

Time will tell whether regulators and corporations will begin to police AI outputs. Until then, we all need to stay vigilant and recognize the shortcomings of relying on this new technology.

Let’s discuss how we can help you achieve your goals with AI SEO while preserving content quality:

Contact Us Today for a Consultation!

Quick Solutions

- How do I recognize when AI-generated content may be inaccurate and ensure that I rely on trustworthy sources?

- How do I ensure that I use AI as a tool to enhance creativity and problem-solving instead of replacing critical thinking?

FAQ: How do I verify AI-generated content for accuracy before using or publishing it publicly?

Everyone today should learn how to identify and fact-check AI-generated information, especially since AI systems can produce incorrect or even fabricated information (“hallucinations”).

One of the first steps you can take is to identify the source of the information and determine its credibility. For example, if the AI cites sources, cross-check those references.

The same applies to any numbers or statistics it cites: confirm that they are up to date and presented in the right context.

It also helps to know that the AI’s training data may contain biases, which can affect how reliable its answers are.

Following these steps will help you judge AI output more critically.

Action Plan

- Identify the AI-generated content that needs verification.

- Check whether the AI has cited any sources or provided evidence for its claims, and read through those sources.

- Cross-reference the citations and compare the information with multiple credible sources (academic journals, etc.).

- Verify any numbers and statistics against official reports or industry publications.

- Use AI detection tools to check whether content was AI-generated, but know that their accuracy varies and their reliability is disputed.

- Think about the content in the context of your own experience or knowledge to assess its validity.

- Train your team on common issues with AI content and how to address them; for verification at scale, consider assigning specific people to oversee the process.

- Develop a workflow for evaluating AI-generated content and document any discrepancies or errors found during the review process.

- Keep up to date with developments in AI technology and their implications, for instance by following advances in AI detection and monitoring updates from AI developers.

- Learn from ethicists to understand the societal impact of AI-generated content.

- Share any best practices or your findings with colleagues, stakeholders, friends or the community.

- Continuously refine your AI verification process to adapt to evolving AI tools.

About Us

Bruce Clay, Inc. has been a pioneer in the field of SEO since 1996. With decades of experience, we specialize in SEO, PPC and content optimization strategies that drive results. Our team helps businesses thrive in the evolving AI landscape. Learn more about our history and achievements on our About Us page.


5 Replies to “When the Answer Is Wrong: What We Risk When We Stop Questioning”

If we don’t continually question things we’re told and accept answers as facts without thinking critically about them, then we are more likely to accept incorrect facts as true. Critical thinking has been one of the most valuable things I’ve learned since becoming a student. When I receive an incorrect answer but do not verify its correctness or accuracy, my ability to learn is greatly diminished.

Interesting perspective. It’s a good reminder that progress often depends on continuing to question assumptions rather than accepting the first answer we get, since curiosity and critical thinking are what drive better ideas and deeper understanding.

Your point about the importance of curiosity and critical thinking really stands out. In digital marketing and SEO, questioning data, strategies, and even search results is essential for making better decisions.

This is such a timely reminder that we can’t just go on autopilot with the answers we find online. It’s wild how easily a confident-sounding answer can override our own common sense. This is a great reminder to keep our “questioning” muscles active instead of going on autopilot.
