# Pubcon Liveblog: How SEOs Can Deal With Algorithm Chaos

Three speakers (Jake Bohall, Bill Hartzer and William Atchison) sort through the issues raised by a volatile algorithm, with the aim of educating us and making us less vulnerable to the constant change.

Quality Content and Quality Links for Algo Chaos Aversion

Jake Bohall @jakebohall, Virante, Inc.

Chaos Theory:

Sensitivity to initial conditions

- Site structure

- Search queries

- Incoming links

Topological mixing

- Inbound links

- Social signals

- Content

Testifying before Congress in 2011, Eric Schmidt said there were more than 500 changes to the Google algo. This graph shows just eight named changes. All the changes happening behind the scenes that we don’t even know about have an effect on what SEOs do.

We’ve also seen negative SEO rising. Matt Cutts has said that it doesn’t happen and that it doesn’t have any noticeable effect, but Jake sees it all the time because his team digs into link cleanup efforts.

Inconsistency with Guidelines: Google has clear guidelines that instruct webmasters to avoid tricks intended to improve search engine ranking including any links intended to manipulate PageRank or a site’s ranking in Google results. Even “natural” links would be considered a way to improve your ranking, so there’s some inconsistency there.

So what’s an SEO to do?

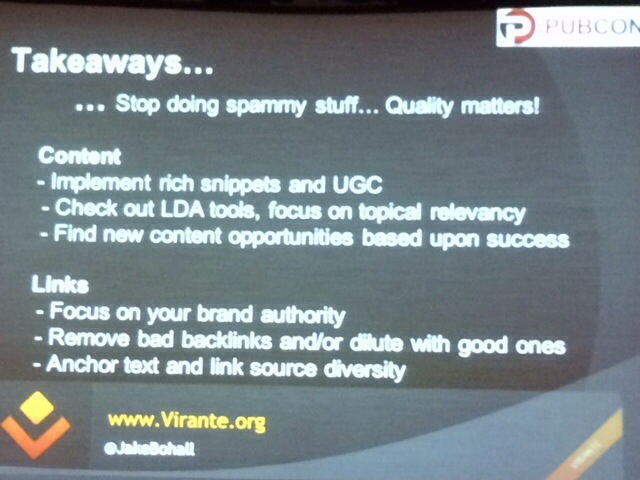

Quality content matters.

Unique content: Don’t copy manufacturer content. Use rich snippets and Schema.org article markup (recently announced as having an impact on in-depth articles), and encourage user-generated content. Your content should prove your users are engaged. Add FAQ content and answer each question in as much depth as possible.

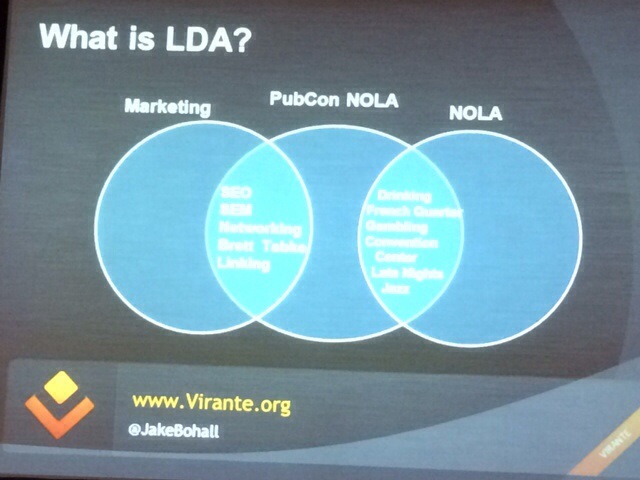

Relevant content: Follow new trends (topical relevancy). Use LDA scoring and keyword concept themes to create content that is more semantically relevant to the keywords you are trying to rank for.

Use topically relevant phrases to build a theme.

Authority content: Google+ is a trend worth pursuing. “It’s really the unification of all of Google’s services, with a common social layer.” —Vic Gundotra, Head of Google+. Create a Google+ account, implement rel=author and rel=publisher to attribute your Knowledge Graph to your profile and brand pages.

Quality links matter.

Better links come from SEO transitioning to PR; care about your company. Digital outreach + PR + social media >> converge to promote your brand.

Diversify your links: aim for a low volume of links from many different sources. Proactively prune bad links. Diversified anchor text is important. RemoveEm.com/ratios.php will give you an idea of whether you have links to be concerned about.
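To make the anchor text point concrete, here is a minimal sketch of how you might measure anchor text concentration from a backlink export. It is not how RemoveEm computes its ratios; the function names and the 30% threshold are assumptions chosen for illustration.

```python
from collections import Counter


def anchor_text_ratios(anchors):
    """Return (anchor text, share of all links) pairs, largest share first."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    total = len(normalized)
    counts = Counter(normalized)
    return [(text, count / total) for text, count in counts.most_common()]


def flag_overused(anchors, threshold=0.3):
    """List anchor texts whose share exceeds the (hypothetical) threshold."""
    return [text for text, share in anchor_text_ratios(anchors) if share > threshold]


if __name__ == "__main__":
    # Made-up sample data: anchor text of each inbound link.
    sample = ["Blue Widgets"] * 6 + ["Acme"] * 2 + ["acme.com", "click here"]
    for text, share in anchor_text_ratios(sample):
        print(f"{share:.0%}  {text}")
    print("Over threshold:", flag_overused(sample))
```

A single commercial phrase dominating the list is the kind of pattern worth a closer look; what counts as "too much" is a judgment call, not a published number.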

Broken link/better link building (BLB) is prospecting for content ideas that already have links. Find out who is/was linking to what content in the past. Replace lost or abandoned content webmasters want to link to.

Recent Major Google Algo Updates

Bill Hartzer @bhartzer, Globe Runner

Google Panda

Google’s ranking factor for identifying low-quality pages. It’s been integrated into the algorithm. Panda was developed with human testers rating quality, design, trustworthiness, speed and the likelihood of returning to a site. It was also launched with info provided through a Google Chrome site blocker extension. Panda launched in February 2011, and the latest known manual update, Panda 25, was on March 14, 2013. Panda now updates on a rolling schedule, about once a month. We expect the softer Panda update announced at SMX West last week to arrive soon.

The easiest way to find out if you were hit by Panda is to look at your web analytics to see any traffic losses that align with known dates of update.
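As a rough sketch of that check, the snippet below compares average daily visits in the week before and after each known update date. The `daily_visits` mapping (date to visit count, e.g. from an analytics export), the seven-day window and the 20% drop threshold are all assumptions for illustration, not anything the speaker specified.

```python
from datetime import date, timedelta


def average_visits(daily_visits, start, days):
    """Average visits over `days` days from `start`, skipping missing days."""
    window = [
        daily_visits[start + timedelta(days=i)]
        for i in range(days)
        if start + timedelta(days=i) in daily_visits
    ]
    return sum(window) / len(window) if window else None


def drops_near_updates(daily_visits, update_dates, window_days=7, min_drop=0.2):
    """Return (update date, fractional drop) for updates followed by a traffic drop."""
    hits = []
    for update in update_dates:
        before = average_visits(
            daily_visits, update - timedelta(days=window_days), window_days
        )
        after = average_visits(daily_visits, update, window_days)
        if not before or after is None:
            continue  # not enough data around this update
        drop = (before - after) / before
        if drop >= min_drop:
            hits.append((update, round(drop, 2)))
    return hits


if __name__ == "__main__":
    # Made-up traffic: 1,000 visits a day, falling to 600 from March 14, 2013.
    start = date(2013, 3, 7)
    visits = {start + timedelta(days=i): (1000 if i < 7 else 600) for i in range(14)}
    print(drops_near_updates(visits, [date(2013, 3, 14)]))
```

A drop that lines up with an update date is a hint, not proof; seasonality or tracking changes can produce the same shape.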

Recovering from Google Panda:

- Make sure all content on site is high quality.

- Use the 23 questions shared by Google to assess quality

Google Penguin

An algorithm change targeted at webspam that decreases rankings for sites that violate Google’s quality guidelines. Examples are keyword stuffing, over-optimization and linking. It was first reported in April 2012, with the last major announced update in October 2013. Matt Cutts has said it’s on a six-month update schedule, in which case we’re due for an update.

To find out if you were hit by Google Penguin, again look at your web analytics for traffic drops aligned with update dates.

Recovering from Google Penguin:

- Perform a full SEO audit of website

- Review Google Webmaster Tools (GWT) for messages and suggestions

- Perform a full link analysis of site, with Majestic SEO, Ahrefs, etc.

- Disavow links to site, force crawls of disavowed links

- Work on authority and trust of site

- Wait for next Penguin update

Other Major Updates

- EMD (Exact Match Domain) Update: Rolled out in September 2012, targeting commercial phrases (AdWords CPC cost + # searches per month = commercial phrase). Recover by cleaning up your link profile, especially anchor text.

- Page Layout Update

- Knowledge Graph Update: Tip: get listed in Freebase.com if you can’t get in Wikipedia

Manual Actions

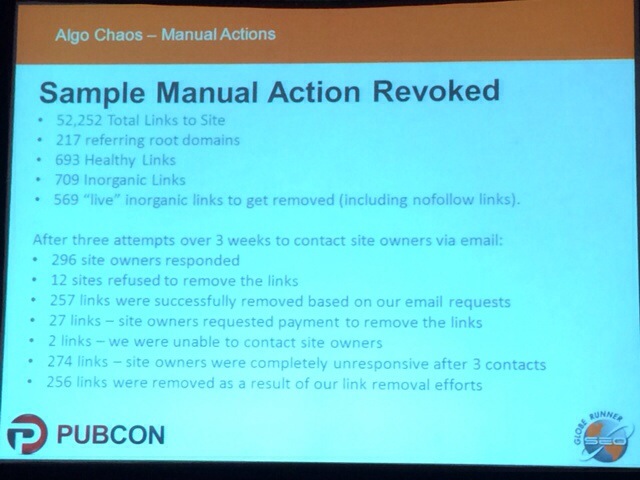

Manual actions are reported in Google Webmaster Tools. Common reports are partial match (affects certain pages), full match (affects the whole site) and unnatural links (requires link cleanup and resubmission).

To get manual actions revoked:

- Identify all links to site

- Manually review links

- Identify links to remove

- Contact site owners

- Document emails and contact dates

- Make 3 attempts to contact site owners

- Disavow links not removed

Then request review with a letter and a spreadsheet with data proof.
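The last step before the review request, disavowing what outreach couldn't remove, can be sketched from an outreach log. This is an illustration only: the log structure (`url`, `attempts`, `removed`) is hypothetical, and disavowing whole domains rather than individual URLs is an assumption you may not want for every link. The output uses the disavow file conventions of `#` comment lines and `domain:` entries.

```python
from urllib.parse import urlparse

MAX_ATTEMPTS = 3  # the three contact attempts recommended in the talk


def build_disavow(link_log):
    """Build disavow file text from a (hypothetical) outreach log.

    Each entry is a dict with 'url', 'attempts' and 'removed' keys.
    Only links that are still live after MAX_ATTEMPTS contacts are included.
    """
    domains = set()
    for entry in link_log:
        if entry["removed"] or entry["attempts"] < MAX_ATTEMPTS:
            continue  # link is gone, or outreach is not finished yet
        host = urlparse(entry["url"]).hostname
        if host:
            domains.add(host)
    lines = [f"# Links still live after {MAX_ATTEMPTS} contact attempts"]
    lines.extend(f"domain:{d}" for d in sorted(domains))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Made-up outreach log for illustration.
    log = [
        {"url": "http://spam.example/page1", "attempts": 3, "removed": False},
        {"url": "http://ok.example/a", "attempts": 3, "removed": True},
        {"url": "http://pending.example/b", "attempts": 1, "removed": False},
    ]
    print(build_disavow(log), end="")
```

The same log doubles as the spreadsheet of contact dates and attempts that goes with the review request.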

Future Google Algo Updates

- Google Panda Update is coming and expected to be softer on small businesses.

- Panda updates are monthly. Penguin updates roll out about six months apart.

- Manual actions: severity of infraction can affect length of time a site will be penalized. Average 6-12 months to lift manual action.

Proactive Content Management to Avoid Algo Chaos

William Atchison @IncrediBILL, Internet Marketing Ninjas

The problem is the things outside your control. Third-party sites can have a negative impact. RSS aggregators, scrapers and spun content will rank your content against your site. Links from bad neighborhoods can damage your search results. Search engines can still be tricked into allowing your site to get hijacked, although it’s rarer now.

Some solutions: search engines now let sites validate spiders with full round-trip DNS validation (a reverse lookup on the IP, then a forward lookup on the returned hostname), and Google Authorship allows you to claim your content.
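Here is a minimal sketch of that DNS check for a visitor claiming to be a search engine spider. The hostname suffixes listed are the ones Google and Bing document for their crawlers, but treat the list as an assumption to verify against each engine's current documentation. The resolver functions are passed in as parameters (a choice made for this sketch) so the logic can be tried without network access.

```python
import socket

# Assumed crawler hostname suffixes; confirm against each engine's docs.
ALLOWED_SUFFIXES = (".googlebot.com", ".google.com", ".search.msn.com")


def reverse_lookup(ip):
    """Reverse DNS: IP address -> primary hostname."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(host):
    """Forward DNS: hostname -> list of IPv4 addresses."""
    return socket.gethostbyname_ex(host)[2]


def is_verified_spider(ip, reverse=reverse_lookup, forward=forward_lookup,
                       suffixes=ALLOWED_SUFFIXES):
    """True only if the IP reverse-resolves to an allowed hostname
    and that hostname forward-resolves back to the same IP."""
    try:
        host = reverse(ip).rstrip(".").lower()
    except OSError:
        return False  # no reverse record: treat as unverified
    if not host.endswith(suffixes):
        return False  # hostname is not in a crawler domain
    try:
        return ip in forward(host)
    except OSError:
        return False


if __name__ == "__main__":
    # Offline demo with stand-in resolvers and made-up records.
    records = {"192.0.2.10": "crawl-192-0-2-10.googlebot.com"}
    fake_reverse = lambda ip: records[ip]
    fake_forward = lambda host: [ip for ip, h in records.items() if h == host]
    print(is_verified_spider("192.0.2.10", fake_reverse, fake_forward))
```

Checking only the user agent string is not enough because scrapers can claim any name; the forward lookup is what stops someone from pointing their own reverse DNS record at a crawler-looking hostname.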

Block scrapers to:

- Avoid bad links and avoid the need to disavow those links

- Avoid duplicate content and filing DMCA complaints

- Avoid brand dilution and customer confusion

- Avoid other search engine pitfalls

Limit RSS feeds:

- Provide minimal feeds only

- Providing full feeds to make it easy for RSS readers also makes it easy for aggregators and scrapers

- Weigh convenience against search engine ranking

Bad links:

- Random spiders and scrapers are bad link factories

- Without blocking the source of the problem, disavow is just a Google version of whack-a-mole

There’s brand and reputation damage that’s caused by scrapers. It confuses customers if they see your content on other sites. If your scraped content is distributed with another site’s shady ads, those shady ads can reflect poorly on your brand.

- Whitelist access to just the countries you service, if possible

- Validate search engine IPs to avoid 3rd-party scrapers

- Don’t let search engines or other sites cache or archive your content, to keep it from getting out of your control

- Specify allowed spiders in robots.txt and disallow all others
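For the robots.txt tip, the sketch below holds an allowlist-style robots.txt as a string and checks it with Python's standard `urllib.robotparser`. The two crawlers allowed here and the example.com URL are placeholders; swap in the spiders you actually want. Keep in mind robots.txt is advisory, so it only stops spiders that choose to obey it, which is why it sits alongside the IP validation tips above.

```python
from urllib.robotparser import RobotFileParser

# Allowlist-style robots.txt: named spiders may crawl everything,
# every other user agent is disallowed. Crawler names are examples.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow:

User-agent: bingbot
Disallow:

User-agent: *
Disallow: /
"""


def can_crawl(user_agent, url="https://www.example.com/page.html"):
    """Check whether the rules above permit `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for agent in ("Googlebot", "bingbot", "RandomScraper"):
        print(agent, "allowed" if can_crawl(agent) else "blocked")
```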
