#Pubcon Liveblog: SEO 2014 – Bruce Clay

Our own Bruce Clay, president of the org that publishes this fine blog, lays out a roadmap for search engine optimization in the coming year. This presentation draws on the SMX West conference last week and on comments made there by Google employees (at Meet the Search Engines and Amit Singhal’s keynote).


Bruce has been performing search engine optimization since 1996 and has watched SEO techniques and strategies evolve over the last 2 decades. He wrote the book on SEO — “SEO All-In-One for Dummies,” which covers time-tested algorithm-proof optimization methodology.

Hummingbird

Hummingbird was the first major update to the Google infrastructure since 2000. The changes were made to speed up the index and ranking system. It’s also one of the first main updates to aid voice search and natural language processing. Hummingbird is a translation layer that tries to figure out what you mean when you speak a query.

Knowledge Graph

According to Google, it’s another tool in the Swiss Army Knife. While Google says that this improved search experience will help the whole web economy, it’s taking traffic away from our websites. It may make Google more attractive, but it makes our websites less attractive. Still, we have to embrace it in order to rank.

Penalties Galore

- There will be a softer Panda that is kinder to small business websites in 2014.

- Link penalties can transfer via duplicate content.

- Reconsideration is all about trust. A penalty does not expire with time; it remains until your site is trusted.

- Penalties also remain until Google refreshes the algorithm. Panda is monthly but Penguin was last updated in October 2013.

- Avoiding penalties is critical. Focus on quality content, usability (why users visit), conversion, traffic, links and SEO best practices.

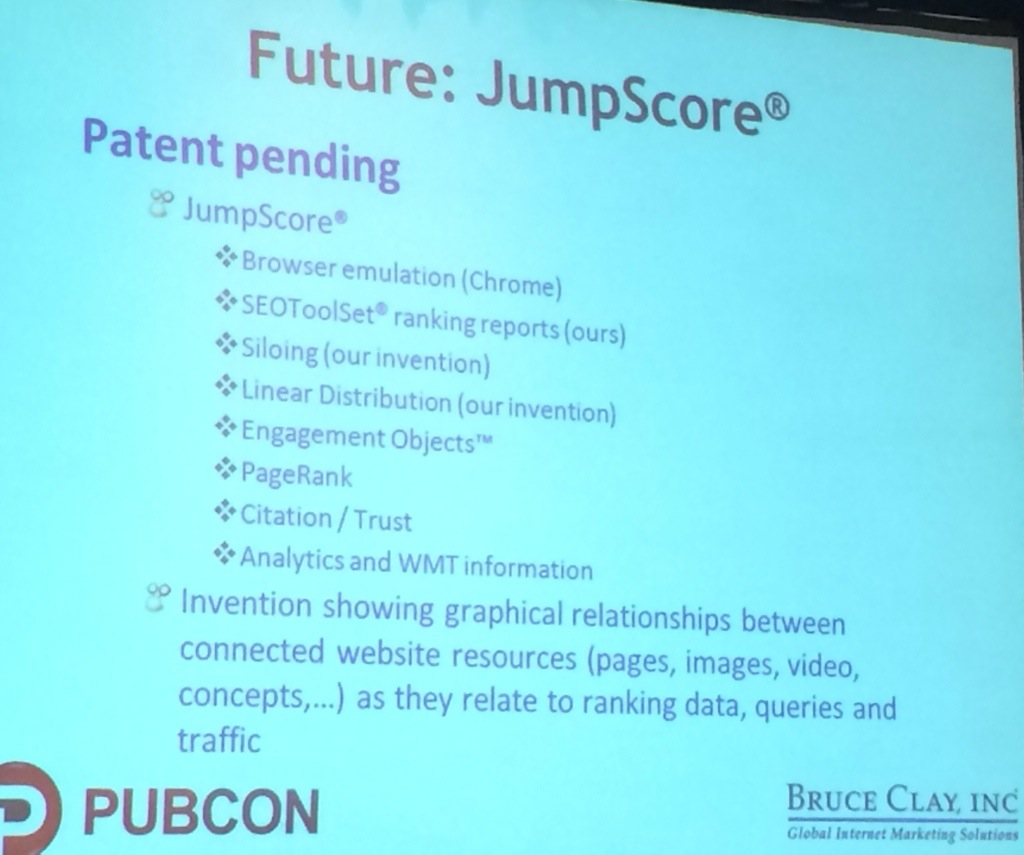

Future: JumpScore

Bruce Clay, Inc. has patented technology that evaluates websites based on how a page is perceived and its overall importance on the web. This is going to be released in a month.

Mobile SEO

Searches on mobile are increasing. Responsive design is the preferred solution. Rankings shift based on the device a user is on, and there will be more personalization by device. Page length also appears to matter, with shorter pages now ranking better.

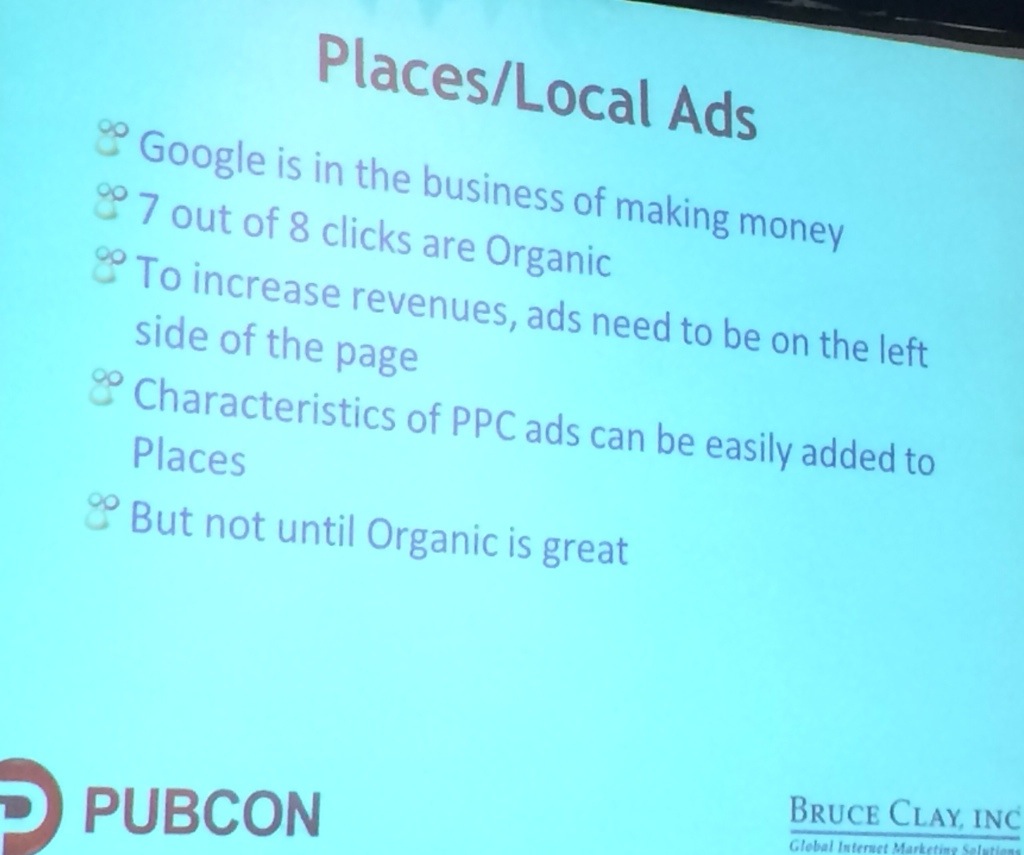

If you think about the new features of AdWords like click to call, and think about the implication of those features in Places, it makes sense that Google would want to implement these ad features in Places.

Organic Entries Per Page

- Expect as few as 5-7 blue links on a page (down from the original 10 organic links)

- If the top 5 results are highly relevant, there’s no reason for Google to include more than 5. With the rest of the space they can include rich media, video, images and news, plus monetized content.

Engagement Objects

Recent research shows a 71% increase in conversion from video on a page. That video can play from YouTube and show up in the SERP, and the click goes to your page (when you include the video in your site’s video XML sitemap).
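As a rough illustration of what listing a video in a video XML sitemap can look like, here is a minimal Python sketch. The function name `build_video_sitemap`, the dictionary keys and the example.com URLs are assumptions made for this example, not anything shown in the presentation. The element names follow Google's published video sitemap extension.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
VIDEO_NS = "http://www.google.com/schemas/sitemap-video/1.1"


def build_video_sitemap(entries):
    """Return a video sitemap as an XML string.

    `entries` is a list of dicts (hypothetical shape for this sketch) with
    the keys page_url, thumbnail_loc, title, description and player_loc.
    """
    # Register prefixes so the output uses a default namespace plus "video:".
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("video", VIDEO_NS)

    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for entry in entries:
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        # <loc> is the page on YOUR site that hosts the video.
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = entry["page_url"]
        video = ET.SubElement(url, f"{{{VIDEO_NS}}}video")
        for tag in ("thumbnail_loc", "title", "description", "player_loc"):
            ET.SubElement(video, f"{{{VIDEO_NS}}}{tag}").text = entry[tag]
    return ET.tostring(urlset, encoding="unicode")


if __name__ == "__main__":
    print(build_video_sitemap([{
        "page_url": "https://www.example.com/seo-video-page",
        "thumbnail_loc": "https://www.example.com/thumbs/seo.jpg",
        "title": "SEO in 2014",
        "description": "A short overview video embedded on the page.",
        "player_loc": "https://www.youtube.com/embed/VIDEO_ID",
    }]))
```

The point of the `<loc>` element is that it names the page on your own site, which is how the click from the search result can land there rather than on the video host.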

Noteworthy Issues

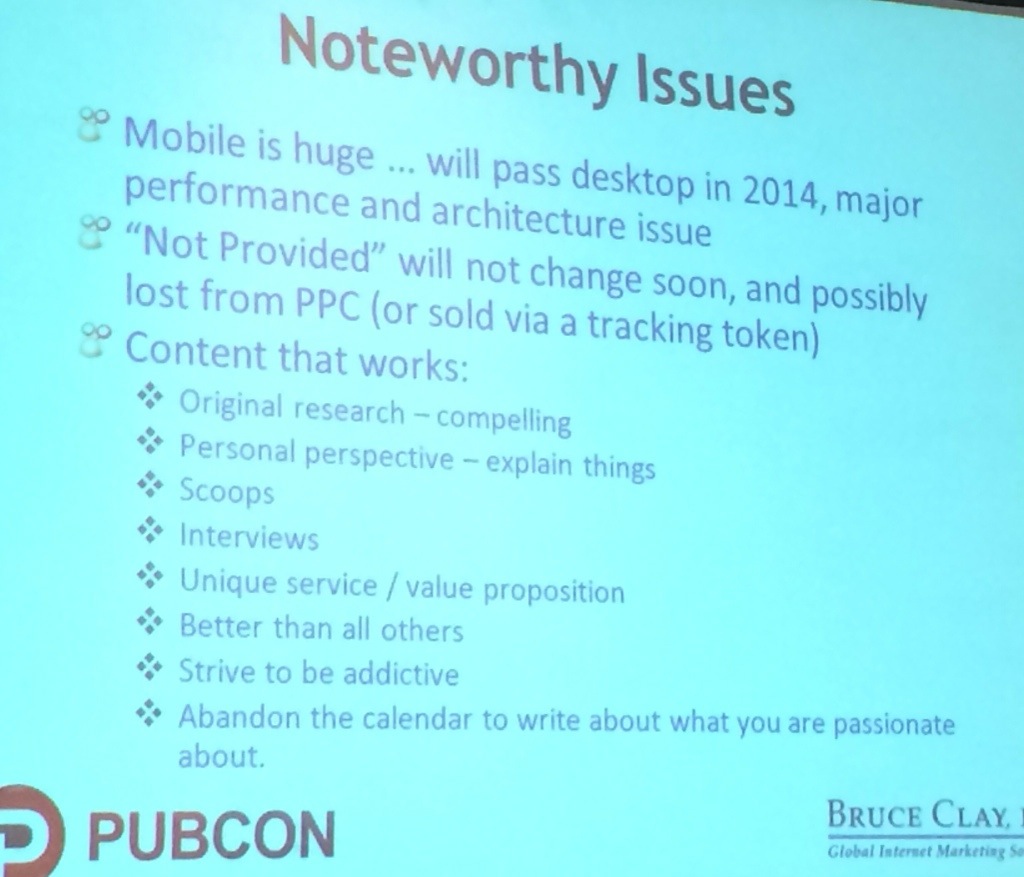

- Mobile is huge and will pass desktop this year. It has big implications for performance and architecture as ranking factors.

- At SMX West it came out that “not provided” keyword data for organic search traffic will not be coming back any time soon, and keyword referral data from PPC may be lost soon as well.

- Content that works (according to Matt Cutts)

- Blogs: consider links and shares per post over posts per week as the metric of success

- Authorship is important. Google remembers the author of a post and keeps that authority ranking with the post even if the author of the blog changes.

- Pagination must work properly and not return a 404.

- Internal search results pages should be ignored by search engines or dropped from the index, not indexed.
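One common way to keep internal search results pages out of the index is to serve them with a `noindex` robots directive. The sketch below assumes a hypothetical site where search pages live under `/search` or carry a `q` query parameter. Both of those, and the function names, are assumptions for illustration only. `X-Robots-Tag` is a real HTTP response header that Google honors.

```python
from urllib.parse import urlsplit, parse_qs

# Hypothetical conventions for this sketch; adjust to your own site.
SEARCH_PATH_PREFIX = "/search"
SEARCH_QUERY_PARAM = "q"


def is_internal_search_url(url):
    """Return True if the URL looks like an internal search results page."""
    parts = urlsplit(url)
    under_search_path = (
        parts.path == SEARCH_PATH_PREFIX
        or parts.path.startswith(SEARCH_PATH_PREFIX + "/")
    )
    has_search_param = SEARCH_QUERY_PARAM in parse_qs(parts.query)
    return under_search_path or has_search_param


def robots_headers(url):
    """Return extra response headers telling crawlers not to index the page."""
    if is_internal_search_url(url):
        # "follow" still lets crawlers follow links found on the page.
        return {"X-Robots-Tag": "noindex, follow"}
    return {}


if __name__ == "__main__":
    for url in ("https://www.example.com/search?q=seo",
                "https://www.example.com/blog/seo-2014"):
        print(url, robots_headers(url))
```

The same directive can be expressed as a `<meta name="robots" content="noindex, follow">` tag in the page itself. The header form is used here only because it is easy to apply in one place.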

To sum it up, SEO in 2014 is about attention to detail and playing by the book: doing the things that search engines like.

Q&A

Q: The more ads that are added to SERPs, the more users are turning to page 2 for results. Have you seen that?

A: Google will address it one way or another, but I haven’t heard that page 2 is getting more traffic. If it is the case, I would expect it to be limited to above the fold.

Q: Penalties following duplicate content: is that happening now, or is it what Google aspires to?

A: According to Matt Cutts, that’s how the penalty works. Here’s a workaround he suggests: noindex the copy site, delete the original site, wait for that site to be removed from the Google index, and then allow the copied site to be indexed.


3 Replies to “#Pubcon Liveblog: SEO 2014 – Bruce Clay”

Oscar, no doubt Google’s having trouble delivering the true author of scraped content. *sigh*

I think Google is still having problems working around the duplicate content thingy. Imagine you’ve just posted a really good, unique piece of content and some site scrapes it. The problem happens if the site that copied your content is crawled by Google first. Will that situation then tell Google that you’re not the original author of the article?
